FireWire

Hello,

Over the past day or so we have been moving a handful of fairly large virtual machines from one Proxmox node to a separate cluster. All of the virtual machines were running off a TrueNAS SCALE NFS share (specs below) and needed to be moved to a Ceph cluster running on the Proxmox cluster.

The first couple of virtual machines moved over quickly at about 200mb/s, but once we got about 500 GB into the transfer everything slowed down to about 10mb/s. I figured maybe the ZFS cache had filled up, but no, it's sitting at 62 GB of the 112 GB usable (see arcstat below). Maybe disk I/O was saturated? Nope, all disks were only reading at about 125kb/s. I ran a couple of dd commands to test writing to and reading from the pool and got 1.2 GB/s write and 533 MB/s read. iperf3 inbound and outbound between all nodes and the TrueNAS system came in at 1 Gbit/s (there's a hop between the two switches that's limited to 1 Gbit/s).

I honestly have no idea what else to test or why this is happening at all. Any help would be appreciated.
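To put the numbers above in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes decimal units and that the transfer rates I quoted are megabytes per second, which I have not double-checked; the function names are just made up for illustration.

```python
def link_ceiling_mb_per_s(gbit_per_s):
    """Theoretical ceiling of a link in MB/s, ignoring protocol overhead."""
    # 1 Gbit/s = 1000 Mbit/s, and 8 bits per byte
    return gbit_per_s * 1000 / 8


def hours_to_copy(size_gb, rate_mb_per_s):
    """Hours needed to copy size_gb gigabytes at a steady rate_mb_per_s."""
    seconds = size_gb * 1000 / rate_mb_per_s
    return seconds / 3600


# Ceiling of the 1 Gbit/s hop between the two switches
ceiling = link_ceiling_mb_per_s(1)

# Time for 500 GB at the fast rate vs. the degraded rate
# (assumption: both rates are megabytes per second)
fast_hours = hours_to_copy(500, 200)
slow_hours = hours_to_copy(500, 10)

print(f"1 Gbit/s hop ceiling: {ceiling:.0f} MB/s")
print(f"500 GB at 200 MB/s: {fast_hours:.1f} h")
print(f"500 GB at 10 MB/s: {slow_hours:.1f} h")
```

If the rates really are megabytes per second, the initial 200 figure is above what a single 1 Gbit/s hop can carry, so either the traffic was not all crossing that hop or the figure is actually in megabits.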

TrueNAS SCALE Version: TrueNAS-SCALE-22.12.0

Server: R740XD

CPU: 2x Xeon Gold 6134

RAM: 128GB

Boot Array: 2x 400GB Intel SSD

HDD: 24x 2.4TB Seagate SAS disks

Network: 6x RJ45 1GbE

Pool

------

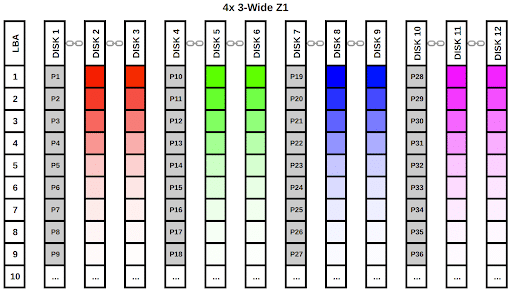

Data VDEVS: RAIDZ2; 20 wide

Dedup VDEVS: 1x mirror; 2 wide

Spare VDEVS: 2

Arcstat

---------

admin@truenas2[~]# arcstat

time read miss miss% dmis dm% pmis pm% mmis mm% size c avail

13:30:17 0 0 0 0 0 0 0 0 0 62G 62G 49G
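For anyone who wants to sanity-check the arcstat line above, here is a small Python sketch that pairs the header with the values and compares the current ARC size with its target. As far as I understand the columns, `size` is the current ARC size and `c` is the ARC target size; the helper names are my own and the suffix handling assumes binary units.

```python
def parse_size(text):
    """Convert an arcstat size such as '62G' to bytes (assumes binary units)."""
    units = {"K": 2**10, "M": 2**20, "G": 2**30, "T": 2**40}
    suffix = text[-1].upper()
    if suffix in units:
        return float(text[:-1]) * units[suffix]
    return float(text)


def parse_arcstat(header, row):
    """Pair each arcstat column name with the value from one output row."""
    return dict(zip(header.split(), row.split()))


# Header and row copied from the arcstat output in this post
header = "time read miss miss% dmis dm% pmis pm% mmis mm% size c avail"
row = "13:30:17 0 0 0 0 0 0 0 0 0 62G 62G 49G"

stats = parse_arcstat(header, row)
size = parse_size(stats["size"])
target = parse_size(stats["c"])
at_target = size >= target

print(f"ARC size {stats['size']}, target {stats['c']}, at target: {at_target}")
print(f"ARC reads in this interval: {stats['read']}")
```

Read this way, the ARC is already at its current target of 62G rather than having headroom up to 112 GB, and the sample shows no ARC reads at all in that interval.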
