I was having a conversation with @jgreco in another thread that strayed into a sidebar about NVMe namespaces.

I have a vSphere cluster that uses TrueNAS-SCALE and iSCSI as the storage backend, and usually I have 90 to 150 windows virtual machines running in this vSphere cluster. Before this problem occurred, it took me about 2 minutes to clone a 60 GB VM in vCenter. However, a few days ago, the time to...

www.truenas.com

He linked me to an old thread from @HoneyBadger: https://www.truenas.com/community/threads/slog-underprovisioning.38374/

Which got some wheels turning when I saw this graph from Micron:

It's fairly well known that NVMe drives generally perform better at queue depths greater than 1. Micron's testing, which created multiple namespaces and scaled a workload out to 8 of them (for whatever marketing application they used), demonstrates that.

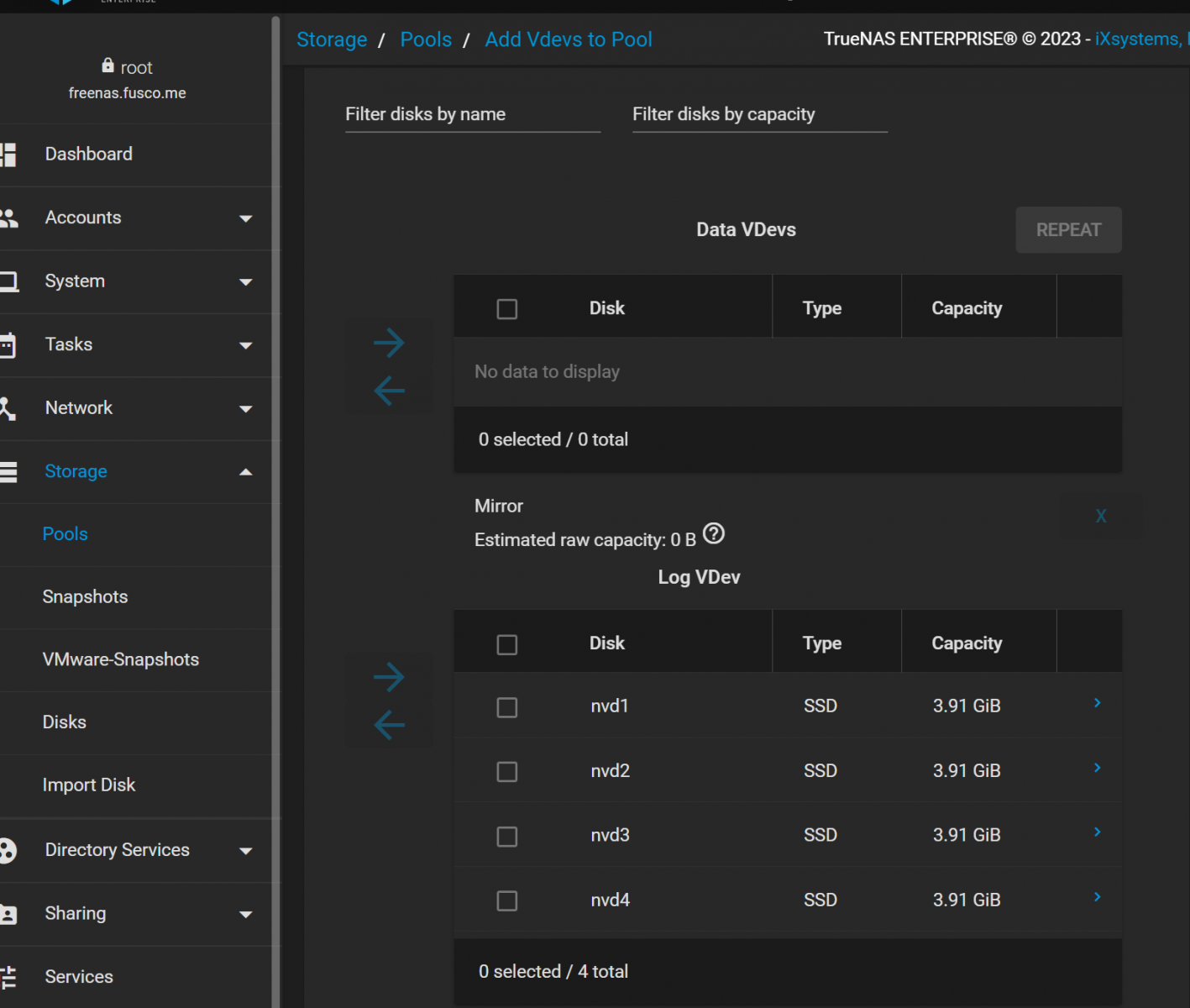

Would this be useful for a SLOG? I created 4 (4GB) namespaces on a 1.6TB SAMSUNG MZWLL1T6HEHP-00003 (PM1725A).

Man Page:

man.freebsd.org

Code:

root@freenas[~]# nvmecontrol ns create -s 8192000 -c 8192000 -n 0 -L 0 -d 0 nvme1

namespace 1 created

root@freenas[~]# nvmecontrol ns create -s 8192000 -c 8192000 -n 1 -L 0 -d 0 nvme1

namespace 2 created

root@freenas[~]# nvmecontrol ns create -s 8192000 -c 8192000 -n 1 -L 0 -d 0 nvme1

namespace 3 created

root@freenas[~]# nvmecontrol ns create -s 8192000 -c 8192000 -n 1 -L 0 -d 0 nvme1

namespace 4 created

root@freenas[~]# nvmecontrol ns attach -n 2 nvme1

namespace 2 attached

root@freenas[~]# nvmecontrol ns attach -n 3 nvme1

namespace 3 attached

root@freenas[~]# nvmecontrol ns attach -n 4 nvme1

(don't ask me why 8192000= 4 Gig, I have no idea, I was just plugging numbers...)
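A possible explanation for the 8192000 figure, offered as a guess rather than something confirmed on this drive: if `-s` and `-c` are counted in logical blocks, and LBA format 0 (`-L 0`) on this drive is 512 bytes, then the arithmetic lands on exactly 4000 MiB, which would match what `nvmecontrol devlist` reports. The helper names below are made up for illustration, and the 512-byte block size is an assumption.

```python
# Sketch: convert between NVMe namespace block counts and sizes.
# ASSUMPTION: the size passed to "ns create" is a count of logical blocks,
# and the selected LBA format uses 512-byte blocks.

MIB = 2**20
GIB = 2**30


def namespace_size_mib(blocks: int, block_bytes: int = 512) -> float:
    """Size in MiB of a namespace made of `blocks` logical blocks."""
    return blocks * block_bytes / MIB


def blocks_for_gib(gib: int, block_bytes: int = 512) -> int:
    """Number of logical blocks needed for a namespace of `gib` GiB."""
    return gib * GIB // block_bytes


if __name__ == "__main__":
    # The value used in the commands above.
    print(namespace_size_mib(8192000))
    # Block count for a true 4 GiB namespace under the same assumption.
    print(blocks_for_gib(4))
```

Under those assumptions, 8192000 blocks is 4000 MiB (a little short of 4 GiB), and a full 4 GiB namespace would need 8388608 blocks.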

I can see them now when I run nvmecontrol devlist. I had to reboot for the UI to see them. I'd imagine it has something to do with glabel, but I was too lazy to dig any deeper.

Code:

root@freenas[~]# nvmecontrol devlist

nvme0: INTEL SSDPEK1A058GA

nvme0ns1 (56245MB)

nvme1: SAMSUNG MZWLL1T6HEHP-00003

nvme1ns1 (4000MB)

nvme1ns2 (4000MB)

nvme1ns3 (4000MB)

nvme1ns4 (4000MB)

Code:

root@freenas[~]# zpool list -v

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

freenas-boot 111G 3.50G 108G - - 0% 3% 1.00x ONLINE -

da24p2 112G 3.50G 108G - - 0% 3.14% - ONLINE

test 87T 676G 86.3T - - 0% 0% 1.00x ONLINE /mnt

mirror-0 7.25T 56.2G 7.20T - - 0% 0.75% - ONLINE

gptid/4b89ccb5-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4bf9b5a4-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-1 7.25T 56.3G 7.20T - - 0% 0.75% - ONLINE

gptid/4a55d9e3-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4a63c5f1-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-2 7.25T 56.3G 7.20T - - 0% 0.75% - ONLINE

gptid/491da9f0-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4be8eb99-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-3 7.25T 56.2G 7.20T - - 0% 0.75% - ONLINE

gptid/4a9f9c8b-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4b9cf351-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-4 7.25T 56.1G 7.20T - - 0% 0.75% - ONLINE

gptid/4b7518a5-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4b5e6c08-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-5 7.25T 56.2G 7.20T - - 0% 0.75% - ONLINE

gptid/4904fd2e-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4ac53ef7-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-6 7.25T 56.4G 7.19T - - 0% 0.76% - ONLINE

gptid/495734c2-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4bb00352-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-7 7.25T 56.4G 7.19T - - 0% 0.75% - ONLINE

gptid/4b6e4069-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4b3d7786-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-8 7.25T 56.4G 7.19T - - 0% 0.76% - ONLINE

gptid/4e436d52-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4f69d0d5-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-9 7.25T 56.4G 7.19T - - 0% 0.75% - ONLINE

gptid/4e68a61a-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4f1cf63b-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-10 7.25T 56.9G 7.19T - - 0% 0.76% - ONLINE

gptid/4f63b958-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4f801d45-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-11 7.25T 56.5G 7.19T - - 0% 0.76% - ONLINE

gptid/4f8ed2aa-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4fe8597c-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

logs - - - - - - - - -

gptid/091e0445-fe98-11ed-8574-ac1f6b566eea 3.91G 0 3.75G - - 0% 0.00% - ONLINE

gptid/09201b82-fe98-11ed-8574-ac1f6b566eea 3.91G 0 3.75G - - 0% 0.00% - ONLINE

gptid/092a14ee-fe98-11ed-8574-ac1f6b566eea 3.91G 0 3.75G - - 0% 0.00% - ONLINE

gptid/0921a9dc-fe98-11ed-8574-ac1f6b566eea 3.91G 0 3.75G - - 0% 0.00% - ONLINE

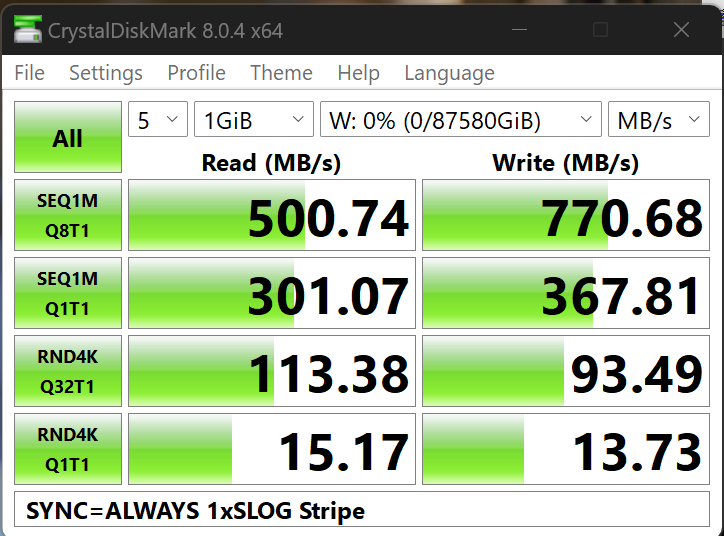

Super quick and dirty benching: I mounted a Samba share on my workstation (10G) and set SYNC=ALWAYS.

I am all ears on a better testing methodology, too. Any idea how I might use diskinfo to test across multiple devices in a meaningful way, to compare against a single NVMe namespace?
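One possible angle on methodology, as a rough sketch and not a proper benchmark: since a SLOG only sees sync writes, a small script that issues fsync'd writes from several concurrent writers might show whether the extra namespaces help once more than one write is in flight. Everything here is hypothetical (the function names, the `syncbench-*.dat` file names, the default block size and counts), and the target directory would need to be swapped for a path on a dataset in the pool under test.

```python
import os
import tempfile
import time
from concurrent.futures import ThreadPoolExecutor


def sync_write_worker(path: str, block_size: int, count: int) -> int:
    """Write `count` blocks to `path`, calling fsync after every write."""
    buf = os.urandom(block_size)
    written = 0
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        for _ in range(count):
            written += os.write(fd, buf)
            os.fsync(fd)  # force each write to stable storage before the next
    finally:
        os.close(fd)
    return written


def run_sync_write_test(directory: str, writers: int = 4,
                        block_size: int = 4096, count: int = 256) -> dict:
    """Run `writers` concurrent sync writers and report aggregate numbers."""
    paths = [os.path.join(directory, f"syncbench-{i}.dat") for i in range(writers)]
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=writers) as pool:
        total = sum(pool.map(
            lambda p: sync_write_worker(p, block_size, count), paths))
    elapsed = time.perf_counter() - start
    for p in paths:
        os.remove(p)  # clean up the scratch files
    return {"bytes": total, "seconds": elapsed,
            "iops": writers * count / elapsed}


if __name__ == "__main__":
    # A temp dir is used here only so the sketch runs anywhere;
    # replace it with a directory on the pool being tested.
    with tempfile.TemporaryDirectory() as scratch:
        for writers in (1, 4):
            print(writers, run_sync_write_test(scratch, writers=writers))
```

Comparing the one-writer and multi-writer numbers with one log device versus four would at least keep the network and Samba out of the picture if it is run locally on the server.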

Running CrystalDiskMark with the above pool layout I got the following:

I ran the same test after removing 3 of the 4 NVMe namespaces from the pool.

Code:

root@freenas[~]# zpool list -v

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

freenas-boot 111G 3.50G 108G - - 0% 3% 1.00x ONLINE -

da24p2 112G 3.50G 108G - - 0% 3.14% - ONLINE

test 87T 678G 86.3T - - 0% 0% 1.00x ONLINE /mnt

mirror-0 7.25T 56.4G 7.19T - - 0% 0.75% - ONLINE

gptid/4b89ccb5-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4bf9b5a4-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-1 7.25T 56.5G 7.19T - - 0% 0.76% - ONLINE

gptid/4a55d9e3-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4a63c5f1-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-2 7.25T 56.4G 7.19T - - 0% 0.75% - ONLINE

gptid/491da9f0-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4be8eb99-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-3 7.25T 56.3G 7.20T - - 0% 0.75% - ONLINE

gptid/4a9f9c8b-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4b9cf351-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-4 7.25T 56.2G 7.20T - - 0% 0.75% - ONLINE

gptid/4b7518a5-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4b5e6c08-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-5 7.25T 56.4G 7.19T - - 0% 0.75% - ONLINE

gptid/4904fd2e-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4ac53ef7-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-6 7.25T 56.6G 7.19T - - 0% 0.76% - ONLINE

gptid/495734c2-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4bb00352-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-7 7.25T 56.5G 7.19T - - 0% 0.76% - ONLINE

gptid/4b6e4069-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4b3d7786-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-8 7.25T 56.6G 7.19T - - 0% 0.76% - ONLINE

gptid/4e436d52-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4f69d0d5-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-9 7.25T 56.6G 7.19T - - 0% 0.76% - ONLINE

gptid/4e68a61a-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4f1cf63b-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-10 7.25T 57.1G 7.19T - - 0% 0.76% - ONLINE

gptid/4f63b958-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4f801d45-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

mirror-11 7.25T 56.6G 7.19T - - 0% 0.76% - ONLINE

gptid/4f8ed2aa-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

gptid/4fe8597c-fbe4-11ed-ac09-ac1f6b566eea 7.26T - - - - - - - ONLINE

logs - - - - - - - - -

gptid/091e0445-fe98-11ed-8574-ac1f6b566eea 3.91G 3.32G 439M - - 0% 88.6% - ONLINE

root@freenas[~]#

FWIW, there is currently nothing else talking to (or running on) this server:

Code:

root@freenas[~]# netstat | grep microsoft

tcp4 0 8360 10.69.40.19.microsoft- bartowski.50073 ESTABLISHED