I posted a few days ago about high latencies with new WD 6TB drives (WD60EZAZ) and got a lesson about SMR. Unfortunately, I'm in that boat now, so I need to make the best of the situation.

In the middle of resilvering drive #6 of 7 in my raidz2 array, I'm seeing what I would consider truly absurd behavior. Read latencies are above 500ms, and I've seen them as high as 1300ms. Queue lengths are in the 60-70 ops range.

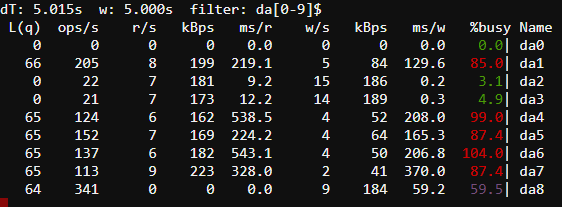

In the gstat output below, da0 is my boot SSD, da2 and da3 are the old 3TB PMR drives, da8 is the resilvering target, and the rest are new drives that have already been resilvered.

Is this just a pathological case of encountering lots of small files, resulting in a pile of tiny writes that back up because the drive has to rewrite full tracks? In this very same resilver, I've seen speeds as high as 50MB/s, which I assume were multi-GB files like VMDKs. This seems like one area in which a traditional track-by-track RAID6 implementation would have an advantage over raidz2. Is it possible to force that behavior in ZFS? (Too late for this array, but it might be an interesting idea for future SMR-based arrays.)

On another note, the gstat output doesn't make a lot of sense; shouldn't the ops/s equal the sum of r/s and w/s? That's off by more than a factor of 10 in this output.
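
For anyone who wants to run the same sanity check on their own output, here is a rough Python sketch of the arithmetic I mean. It assumes gstat's default column order (L(q), ops/s, r/s, kBps, ms/r, w/s, kBps, ms/w, %busy, Name); the function names are my own, and the sample row is made up for illustration, not copied from my array.

```python
# Column order assumed from gstat's default header:
# L(q) ops/s r/s kBps ms/r w/s kBps ms/w %busy Name
COLUMNS = ["queue", "ops_s", "r_s", "r_kbps", "ms_r",
           "w_s", "w_kbps", "ms_w", "busy"]


def parse_gstat_line(line):
    """Parse one device row of default-layout gstat output into a dict."""
    # The %busy value may be followed by a '|' separator; treat it as whitespace.
    parts = line.replace("|", " ").split()
    if len(parts) != len(COLUMNS) + 1:
        raise ValueError("unexpected number of columns: %r" % line)
    row = {key: float(value) for key, value in zip(COLUMNS, parts)}
    row["name"] = parts[-1]
    return row


def unaccounted_ops(row):
    """Return ops/s minus (r/s + w/s); zero means the columns add up."""
    return row["ops_s"] - (row["r_s"] + row["w_s"])


if __name__ == "__main__":
    # Hypothetical row, invented for this example.
    sample = "   65    700     10    640  520.0     45   2880   30.0  100.0| da8"
    row = parse_gstat_line(sample)
    print(row["name"], unaccounted_ops(row))
```

A nonzero result just quantifies the gap per device; it doesn't say where the extra operations come from.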
