doutatsu

Dabbler

- Joined

- May 26, 2018

- Messages

- 13

Hello everyone o/

I've had my FreeNAS system for a while, with a RAIDZ1 setup of three 2TB WD Reds. As I was running out of storage, I wanted to replace the disks one by one to grow the pool, following the guide here. Because I didn't have any open SATA ports left on the machine, I used an external enclosure to connect the new drive over USB 3. All went well: I used the replace-drive option in the GUI, waited for the resilver to finish, and saw my pool green with the replaced drive.

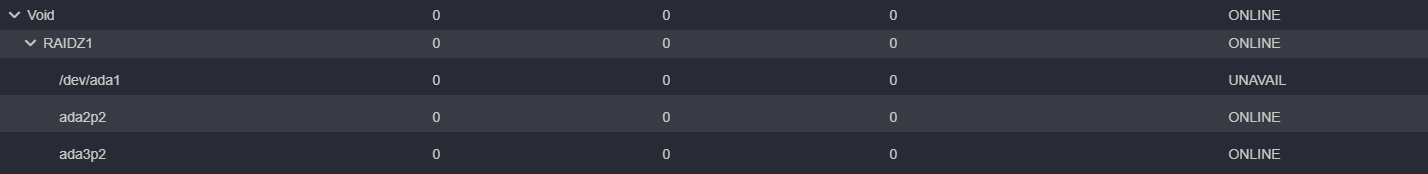

The issue came when I removed the old disk and then moved the new disk from the enclosure into the NAS. After turning the system on again, the disk was unavailable and the pool was degraded... This is how the Pool Status looked:

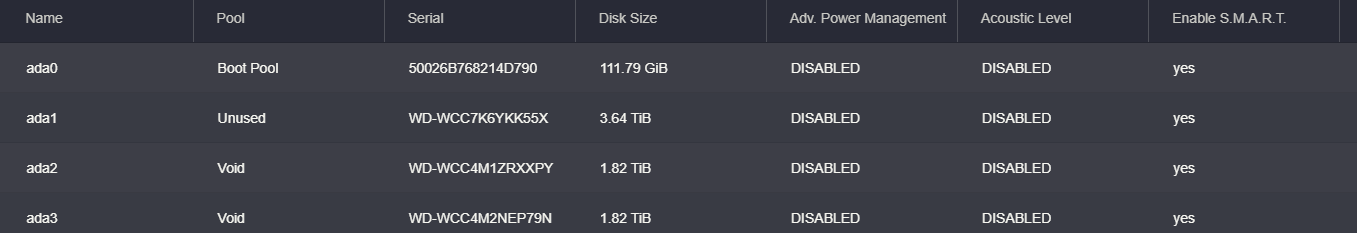

And then looking into disks:

So after trying a couple of commands and checking the zpool status over SSH, I got this error:


One or more devices could not be used because the label is missing or invalid

Looking more into this, it seems that a GUID wasn't assigned to the new drive, hence it can't just be moved across? This is where I am unsure of what is going on and how to fix it. I tried exporting and importing the pool, as some suggested, to no avail. I think I can manually label the drive through bash, but that didn't sound like the right thing to do. I would really appreciate help with this.