I am in the process of migrating to TrueNAS Scale on a system that started as FreeNAS and is currently running TrueNAS Core. The process involves retiring the pools that use FreeBSD's GELI (Legacy Encryption) and migrating their data to new pools that use native ZFS encryption. I've already merged two media pools into one without problems, but the last pool is giving me trouble. It holds all the user data, which is normally accessed over SMB from various PCs.

What I've done is create a new pool with ZFS encryption using disks identical to the old ones (these disks previously held data that has already been migrated to new drives). I'll probably replace them within a year, but for now I'm just trying to get rid of the GELI encryption. So at the moment I'm working with two pools on four identical HDDs:

1) Mirror of two 4TB WD Red drives (Model WD40EFRX-68W) - using Legacy encryption

2) Mirror of two 4TB WD Red drives (Model WD40EFRX-68W) - using ZFS encryption

Steps leading up to this issue:

1) I used rsync to copy the files to the new pool.

2) I used rsync again to verify the data using the --checksum option.

3) A month passed. I switched the Samba config to use the new datasets (on the new pool). Then I repeated steps 1 and 2 to pull in data that had been written to the old pool in the meantime. This is where the problem starts.

4) During the second verify (rsync --checksum), one of the drives in the new pool had a read error. ZFS repaired it, and I decided to run a scrub to see whether everything was really okay. The scrub starts out fast, but at around 52% it suddenly slows to a crawl.
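For anyone who wants to double-check the copy independently of rsync, here is a minimal Python sketch of the same idea as a `--checksum` verify: hash every file on both sides and report anything missing or different. It is not how rsync itself works internally, and the mount points in the usage line are hypothetical placeholders.

```python
import hashlib
import os
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large media files never need to fit in RAM."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def compare_trees(src: Path, dst: Path) -> dict:
    """Return relative paths that are missing from dst or whose contents differ."""
    missing, mismatched = [], []
    for root, _dirs, files in os.walk(src):
        for name in files:
            s = Path(root) / name
            rel = s.relative_to(src)
            d = dst / rel
            if not d.is_file():
                missing.append(str(rel))
            elif file_sha256(s) != file_sha256(d):
                mismatched.append(str(rel))
    return {"missing": sorted(missing), "mismatched": sorted(mismatched)}
```

Usage would be something like `compare_trees(Path("/mnt/oldpool/users"), Path("/mnt/newpool/users"))` (hypothetical paths). Like the rsync verify, it reads every byte on both pools, so it is best not run while a scrub is in progress.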

After 12 hours, I stopped the scrub and restarted it. This morning it was at 54% with 8 hours to go (and climbing). There are no errors in the log, and what really puzzles me is that the old pool (with GELI) completes a scrub in about 8 hours total.

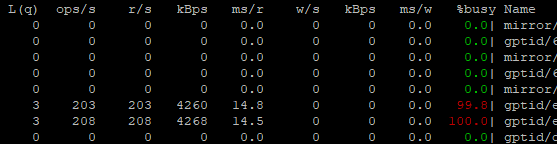

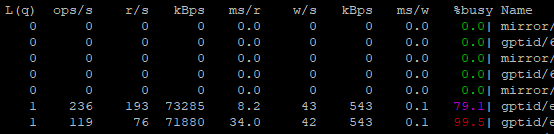

At the time of this post, the new pool's scrub is 58% complete with 8.5 hours to go and no errors reported. All morning the read rate on both drives has been a steady 4 to 6 MB/s at ~200 IOPS, with both drives 98-100% busy. However, just a moment ago "gstat -f /" began reporting that the scrub is running at 80-90 MB/s. I haven't changed anything while writing this post, but unless it slows down again, it is on track to finish within a couple of hours.

(Screenshots "Before" and "Now" not included.)
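Dividing throughput by IOPS gives the average size of each read, which helps put those gstat numbers in context. A quick sketch, assuming gstat's figures are decimal MB and that the ~200 IOPS held across the whole 4 to 6 MB/s range:

```python
def avg_bytes_per_io(throughput_mb_s: float, iops: float) -> float:
    """Average bytes transferred per I/O operation.

    Assumes 1 MB = 1,000,000 bytes; gstat's exact unit convention may differ
    slightly, which doesn't change the order of magnitude.
    """
    return throughput_mb_s * 1_000_000 / iops


# Slow-phase figures reported above: 4 to 6 MB/s at ~200 IOPS.
for mb_s in (4, 5, 6):
    kb = avg_bytes_per_io(mb_s, 200) / 1000
    print(f"{mb_s} MB/s at 200 IOPS -> about {kb:.0f} kB per read")
```

That works out to roughly 20 to 30 kB per read with the drives fully busy, which looks more like seek-bound small-block reads than a drive streaming large records. On its own, though, that doesn't rule out a hardware problem.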

All of the drives have passed the short SMART test, but I haven't run the long one yet because the scrub is still running. I'm posting because this is all very confusing to me. The only difference is the encryption: GELI on the whole disk for the old pool versus encryption integrated into ZFS for the new one. The hardware and data are the same, and my system isn't reporting any errors besides that single read error yesterday (which may not have been caused by the HDD itself).

Could there be an issue with how a scrub processes ZFS-encrypted data versus unencrypted data (ZFS sees the GELI pool as unencrypted)? Could the speed difference come down to file size, with the slow stretches happening on small files? Overall I'm nervous that the hardware is bad, even though I've only had one read error (ever). Perhaps more importantly, I'm wondering whether I configured the new pool wrong, since now is the only time I can easily fix it.
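One way to test the small-file theory is to look at how the data is distributed by file size. Below is a minimal Python sketch that walks a dataset and counts regular files and bytes per size bucket. The bucket edges are arbitrary; 128 KiB was picked only because it is ZFS's default recordsize.

```python
import os
import stat
from collections import Counter

# Exclusive upper bounds (in bytes) for each size bucket. The edges are
# arbitrary; 128 KiB matches ZFS's default recordsize.
BUCKETS = [
    (4 * 1024, "<4 KiB"),
    (128 * 1024, "4-128 KiB"),
    (1024 * 1024, "128 KiB-1 MiB"),
    (100 * 1024 * 1024, "1-100 MiB"),
]


def bucket_for(size: int) -> str:
    """Return the label of the first bucket whose upper bound exceeds size."""
    for limit, label in BUCKETS:
        if size < limit:
            return label
    return ">=100 MiB"


def size_histogram(root: str) -> tuple[Counter, Counter]:
    """Count regular files and their total bytes per size bucket under root."""
    counts: Counter = Counter()
    total_bytes: Counter = Counter()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                st = os.lstat(path)  # lstat: don't follow symlinks
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if not stat.S_ISREG(st.st_mode):
                continue  # skip symlinks, sockets, device nodes, etc.
            label = bucket_for(st.st_size)
            counts[label] += 1
            total_bytes[label] += st.st_size
    return counts, total_bytes
```

Usage would be `counts, total_bytes = size_histogram("/mnt/newpool/users")` (hypothetical path). If a large share of the files fall into the smallest buckets, that would be consistent with the slow stretches, but it wouldn't prove it, since a scrub walks blocks rather than files.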