jordanthompson (Patron, joined Mar 5, 2022, 224 messages)

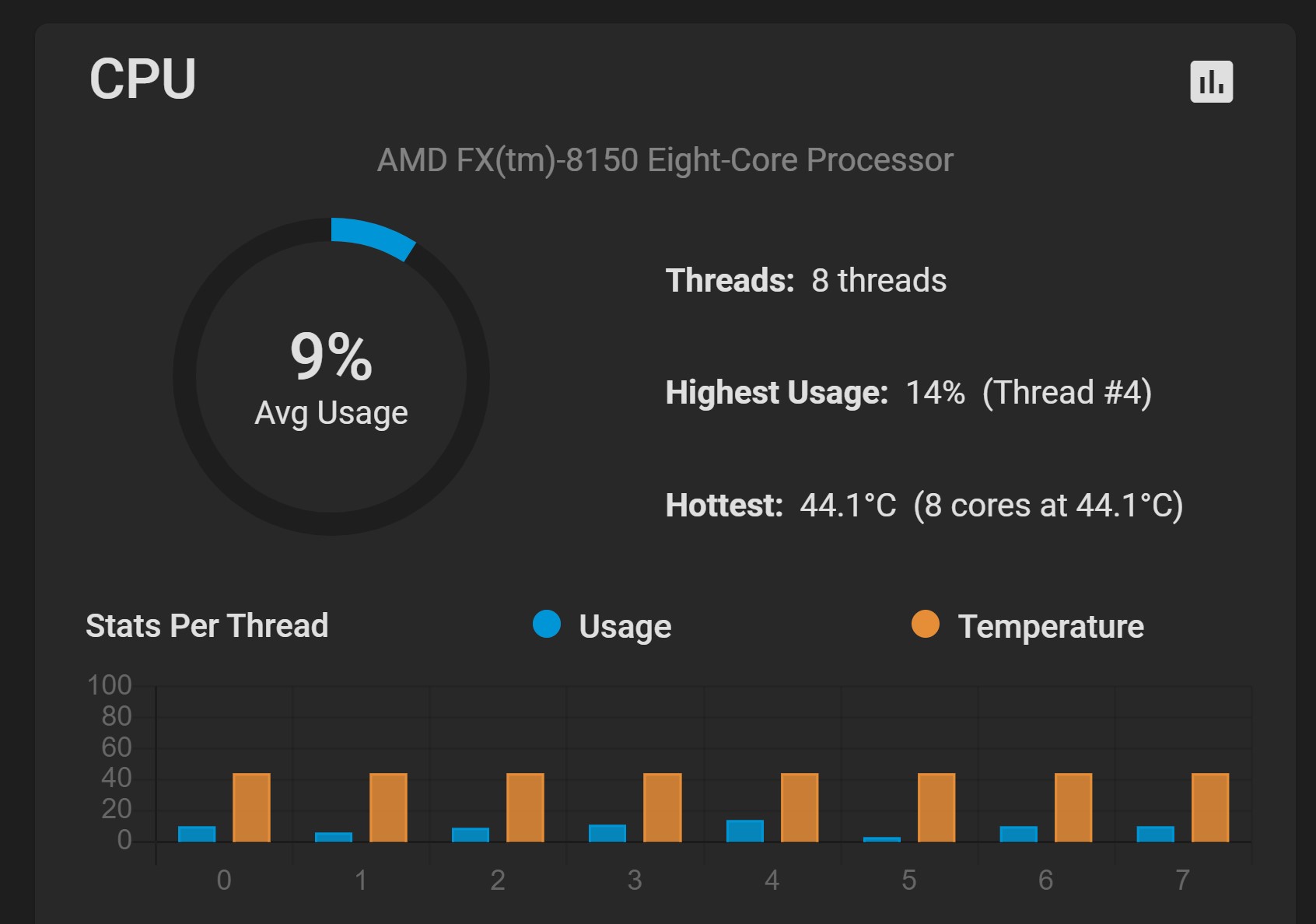

I am new to TrueNAS so this server is only a few days old:

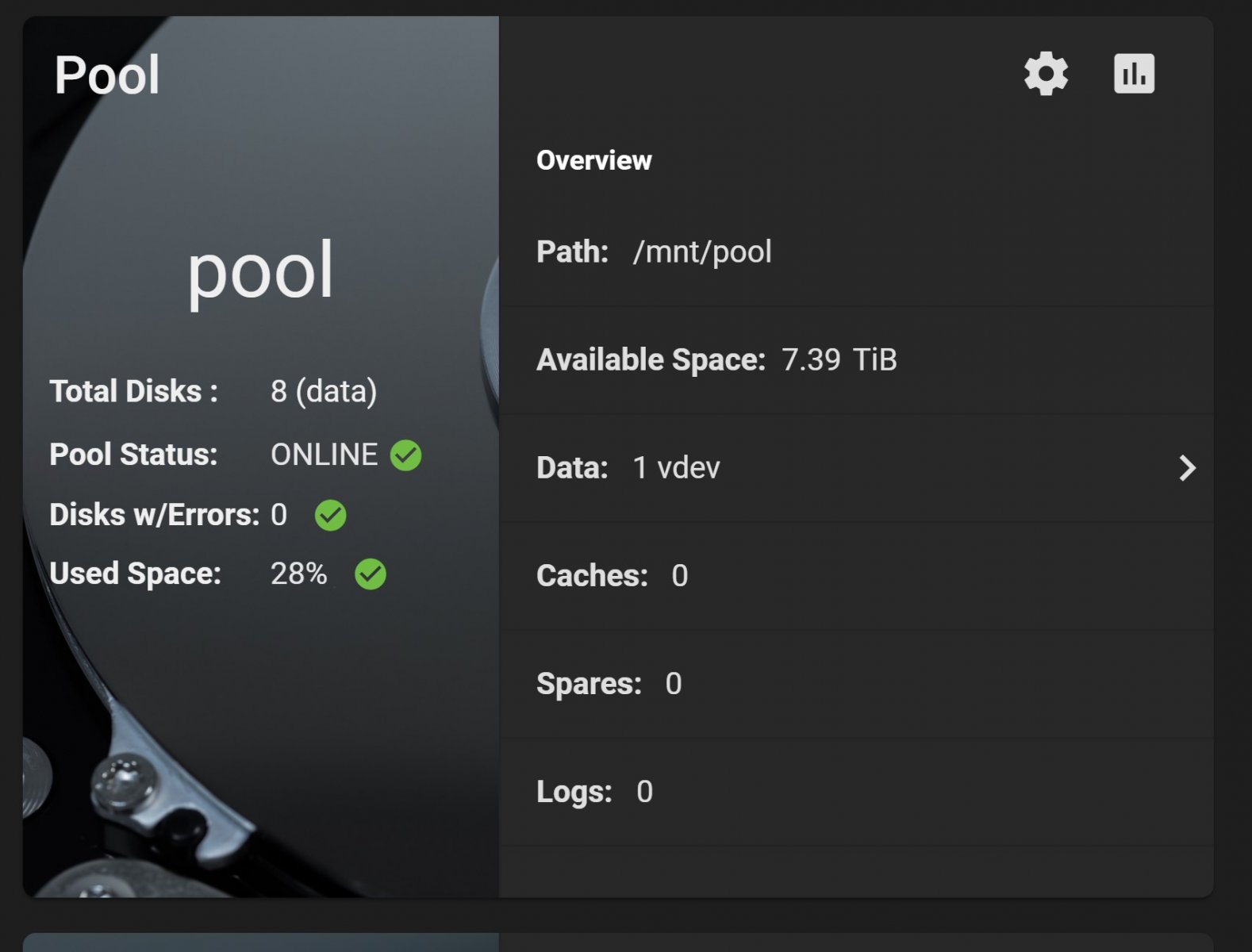

16 × 2 TB drives (RAID-Z2)

2 × SSD (mirrored for boot)

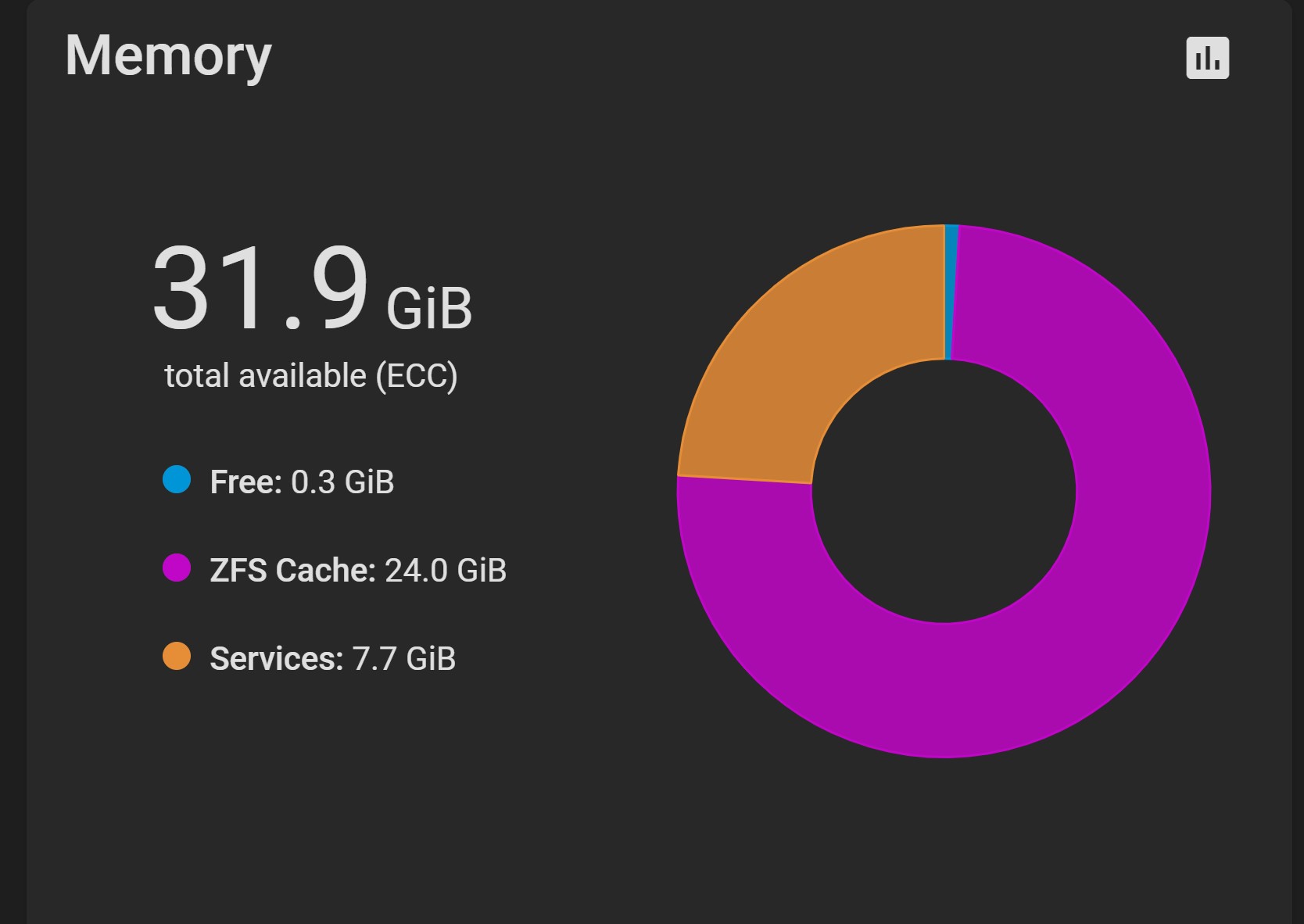

32 GB RAM

I have a VM running Ubuntu desktop with 4 GB RAM (it is stopped)

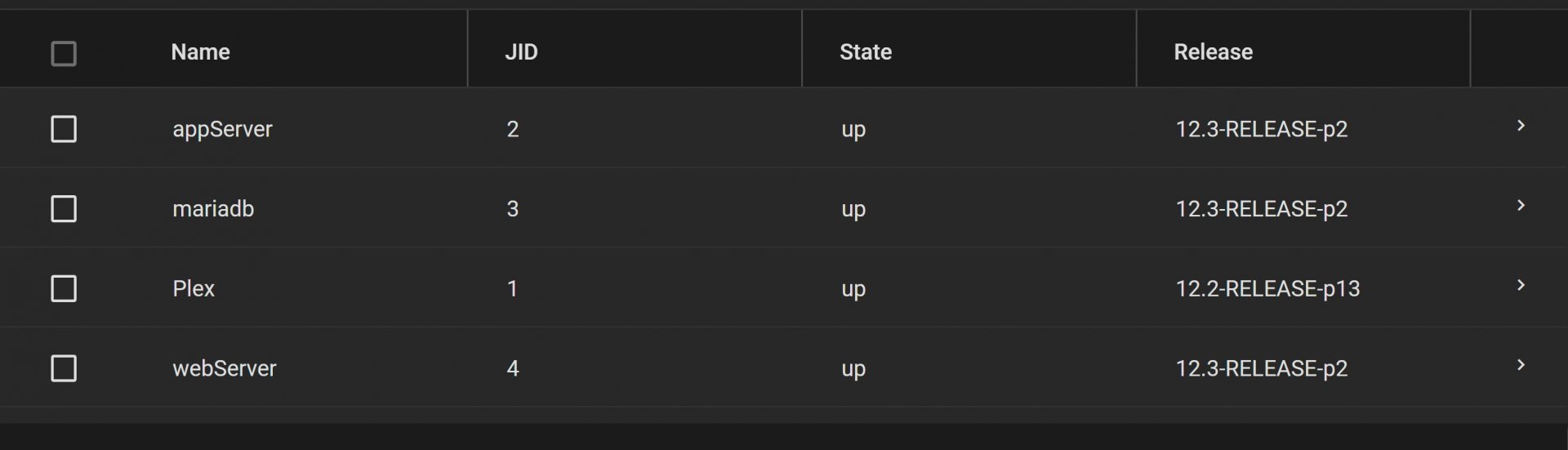

I have 4 jails:

* Plex (very little activity)

* MariaDB (not using it yet, but it is up and running)

* Apache (just says hello ;-)

* general purpose that will eventually run scripts via cron

I am getting literally hundreds of these messages per minute.

I added 16 GB RAM a few days ago (total of 32 GB now)

I've been running a backup script (uses rsync) from the console for a couple of days (starting it manually after each boot)

These errors started last night after I added an Ubuntu VM. I gave it 4 GB RAM, but I shut it down after I first saw the errors and it has stayed off all night.

I am/have been backing up a large part of the pool to a USB drive. Is this going to make the backup suspect?

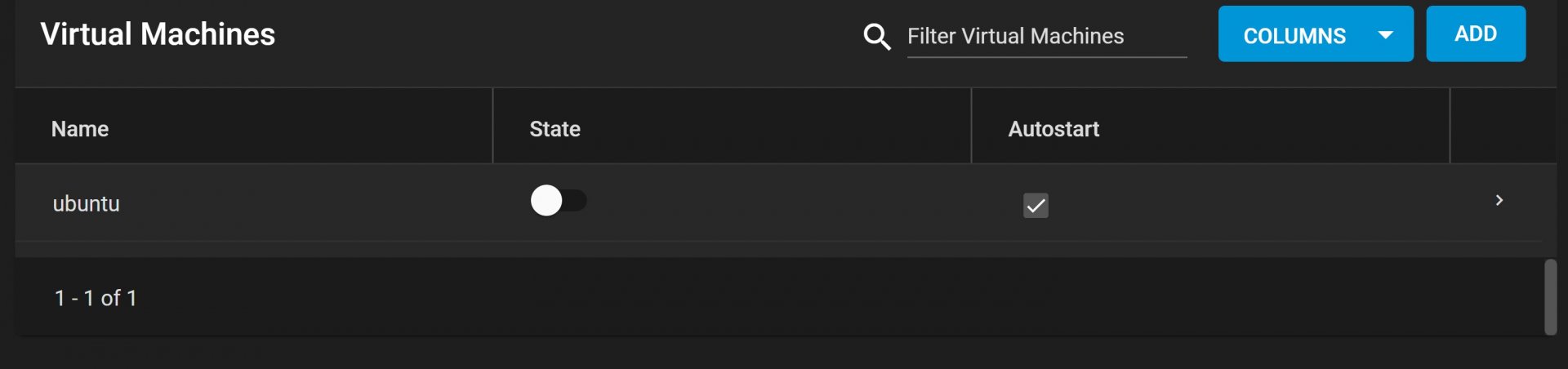

Here are my jails:

I have one VM, but it has been shut down for 10 hours

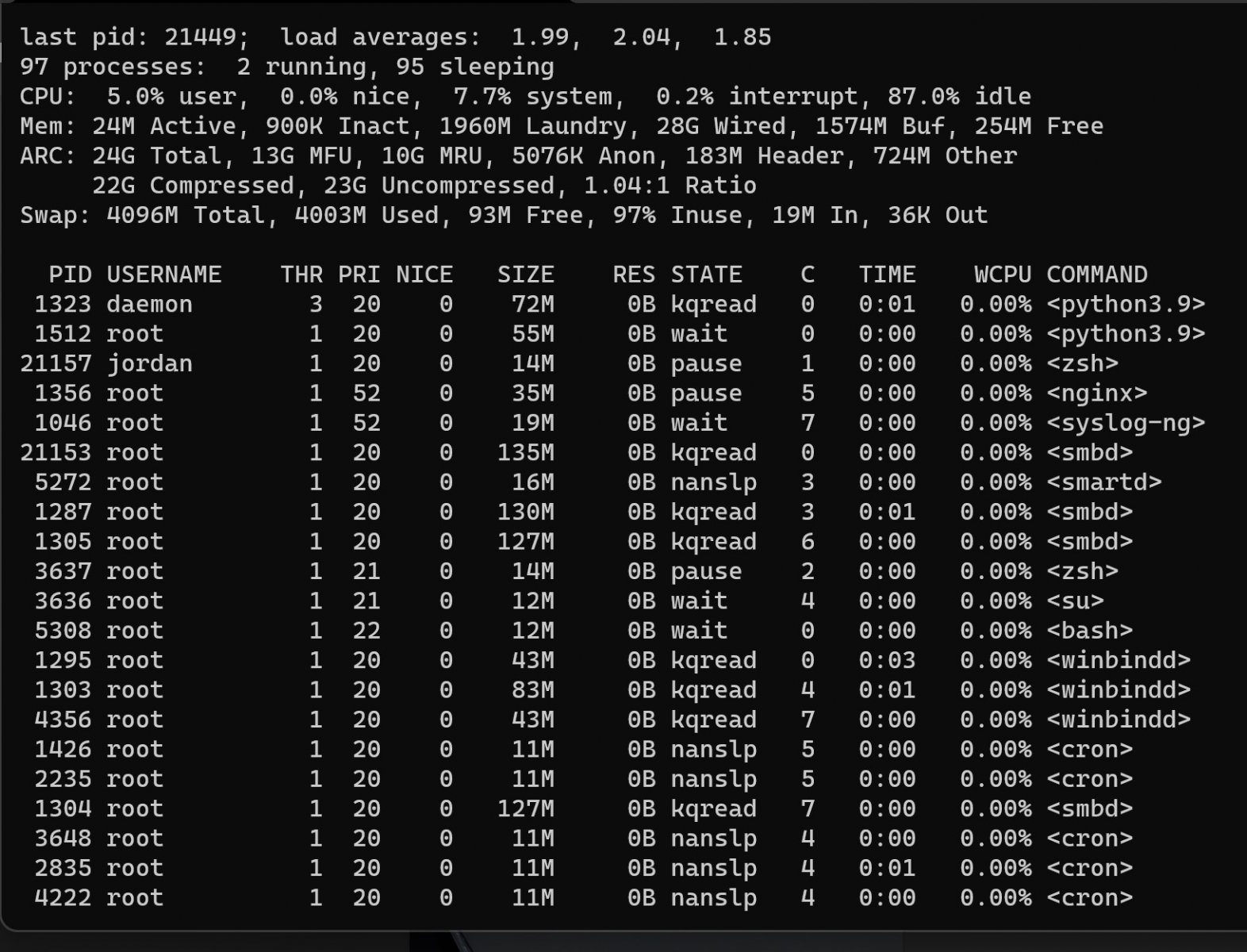

Output from top sorted by swap:
