Important Announcement for the TrueNAS Community.

The TrueNAS Community has now been moved. This forum has become READ-ONLY for historical purposes. Please feel free to join us on the new TrueNAS Community Forums

40Gb Mellanox card setup

- Thread starter SimonFN

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

> I google all over and see where you can set IB or ETH... but I don't have that. Even after installing 5.50 drivers, I don't get the port protocol tab... driving me crazy...

Had the exact same problem when coming back to these Mellanox adapters after not touching them for ages.

Probably what's happening is that you're looking at the Mellanox adapter entry under the "Network adapters" section of Device Manager. Although there's an entry there for the cards, it's not the right one for changing the port protocol.

Instead, look under the "System devices" section of Device Manager. It turns out these Mellanox cards also put an entry there. You should see it when you look, as the name will be sort of obvious. That's the one for changing the port protocol.

And yeah, it's definitely not a "standard" way to do things. Guessing it's because the entry under "Network adapters" gets replaced completely when you change the port protocol, so they needed an entry which would be stable across changes, thus the 2nd one under "System devices".

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

> my current problem is only the top ports are working and I can't get the bottom to work no matter what.

Hmmm. That actually sounds really familiar. I think I've seen the same thing happen before too, but never bothered to investigate it as I only really needed a single port.

It might turn out to be some kind of default limit hard coded into the FreeNAS configuration when it's all compiled. eg "Number of ports on the card" type of thing.

If that's the case, it probably won't be too hard to change. It'd just be a matter of finding the right one + creating a support ticket pointing it out, or (more optimal) just submitting a patch directly to make the change.

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

@TeleFragger As a thought, are you decent with compiling stuff?

If you are, then building FreeNAS from source is fairly straightforward. Getting the hang of that lets you get pretty creative when wanting to experiment with drivers.

The source code for FreeNAS 11 is here:

https://github.com/freenas/build

There's a (pretty simple) 5 step walk through of the compile steps in the old source code repo, with screenshots (etc):

https://github.com/freenas/corral-b...ter)-—-Setting-up-a-FreeNAS-build-environment

The instructions in that should still work. They used to be completely cut-n-paste-able, but not sure if anyone's tried them in a while. ;)

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

> hp rebranded cards ... Mellanox MHGH29-XTC.

...

> would still love to get a 2.9.1200 or 2.10 fw though. tried following many steps and can never find the raw file...

Oh. I used to use those exact cards. May still have the firmware files hanging around in my archives (on the FreeNAS server even). ;)

I'll check sometime today. Hopefully works out ok. :)

Which firmware version are you using atm?

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

Just read through that HardForum thread. You've definitely been at this for ages, and been learning along the way. Good stuff. :)

One thing that stands out to me, is that in your screenshots over time it seems you're not taking into account the type of physical slot you're putting the cards into. eg x4, x8, x16 PCIe slots.

From memory - as it's been a while for me too - the MHGH28-XTC cards are designed for PCIe 2.0 x16. So, they'll be able to use their full bandwidth (eg 1GB/s) in an x16 slot. If you put them in an x8 slot, they'll technically work... but your maximum bandwidth will be reduced as there's less bandwidth on their motherboard connector for them to transfer stuff through. Not sure if the cards will even work in x4 slots. If they do, it's likely they'll be even more bandwidth constrained.
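As a rough sanity check on the slot question, here's a small sketch of the theoretical PCIe 2.0 numbers per slot width. It only assumes the standard PCIe 2.0 figures (5 GT/s per lane, 8b/10b encoding), and the function name is just made up for illustration. Real-world throughput will be lower once protocol overhead is taken into account.

```python
# Rough PCIe 2.0 bandwidth per slot width (one direction only).
# PCIe 2.0 signals at 5 GT/s per lane and uses 8b/10b encoding,
# so only 8 of every 10 bits on the wire carry data.

GT_PER_SEC = 5.0               # PCIe 2.0 transfer rate per lane
ENCODING_EFFICIENCY = 8 / 10   # 8b/10b line encoding


def pcie2_bandwidth_gbytes(lanes: int) -> float:
    """Approximate usable bandwidth in GB/s for a PCIe 2.0 link."""
    gbits = GT_PER_SEC * ENCODING_EFFICIENCY * lanes
    return gbits / 8  # bits -> bytes


for lanes in (4, 8, 16):
    print(f"x{lanes}: ~{pcie2_bandwidth_gbytes(lanes):.1f} GB/s per direction")
```

That works out to roughly 2, 4 and 8 GB/s for x4, x8 and x16, so the slot width (and the width the card actually negotiates) makes a big difference.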

> Mellanox 2.9.1000

Ahhh, yeah. That's what's on most of mine.

> I was told 2.9.1200 enables something that makes network performance better

Sounds like RDMA mode, which (under Windows) allows for much higher performance. The Windows SMB drivers are reported to be able to use RDMA mode when talking to other Windows boxes. Not something I've played around with, but if you can get it running then why not? :)

I did experiment with getting the 2.9.1200 firmware onto some of my cards a while back too, just to see how it goes, and got it working. It required pulling apart the driver packages from HP's downloads, as they include some extra files that allow for regenerating firmware with new settings.

Got it working eventually, but it was a real time sink. eg searching the HP(E?) website for Mellanox drivers, downloading them, installing + extracting bits, just to check if the driver package had the right pieces.

Looking through my archives... I didn't keep any of the end results. Dammit. I only have some of the driver packages I downloaded, and they're not even sorted into good/bad.

That's kinda weird, as I normally archive the end result of things too, just so I don't lose work. :(

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

Btw, with your cards also make sure you're flashing the right "revision" when doing the firmware.

For example, the MHGH28-XTC cards seem to have been manufactured in batches (months apart?), with slight tweaks made to the design in between. So, there are (for example) A1 cards, and also (later on) A2 (etc).

Sometimes a firmware is for just a specific revision, sometimes it's for a range of revisions. It'll generally say in the firmware download file (eg A2-A3).

I think I used to get the revision number from looking at the back of the cards, as it's normally printed on the board. Or maybe on a sticker on the board. (memory is faint for this)

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

Good thinking. :)

Unfortunately, I've moved countries so don't have access to any of my old gear.

Using a fairly minimal setup at the moment (no 10Gb anything), as I get stuff sorted out in my new location.

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

> can you tell me what files you have so I have an idea what im looking for? I've been just all over Mellanox site but if you fund stuff on HP site that got you to 2.10, id super appreciate it!!!!

Pretty sure you're looking for the HP firmware updates for their rebranded Mellanox cards, from the HP(E?) website.

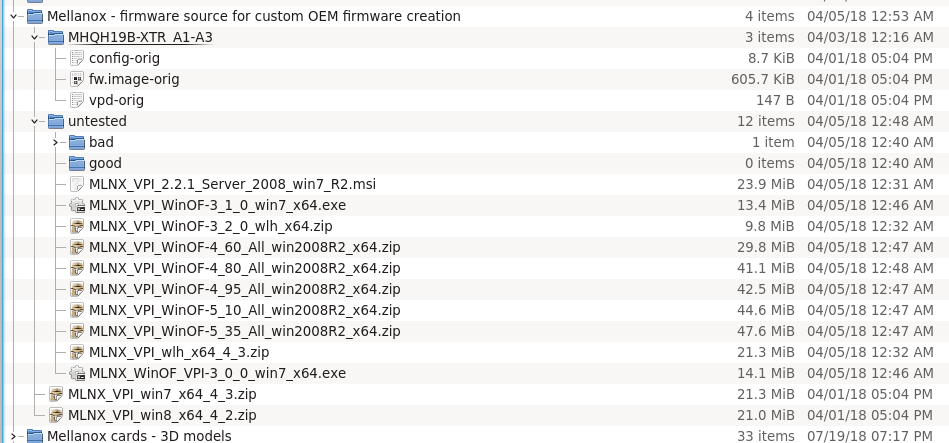

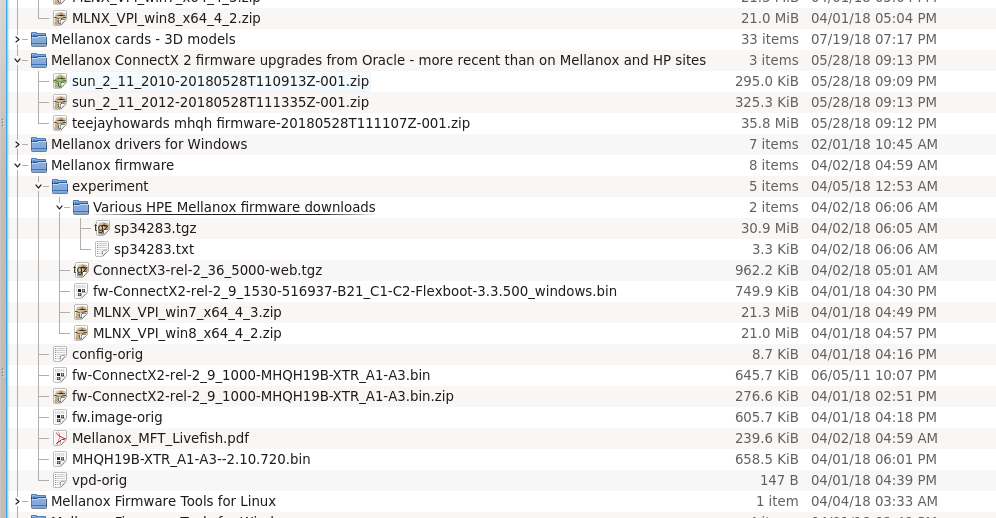

Taking a lazy approach here, these are screenshots of the archive dirs with the downloaded stuff. Very messy (unusually so):

and:

I think the thing I figured out was that if an archive was over a specific size (maybe the ~40MB range?) it would likely contain the right bits, whereas smaller ones (no idea of size) wouldn't.

But the right bits only show up temporarily - in an extracted temp dir created by the HP installer - while the initial screen for the HP driver is shown.

So, it's a case of starting the installer (which extracts the driver's contents to a temp dir in the background), and waiting for the initial screen to appear. Then check for the new temp dir (not hard, as they get created inside wherever the TEMP environment variable points). If that new temp dir contains some *.mlx files for ConnectX one adapters, you're golden. If not, try the next download.
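If hunting through the temp dir by hand gets tedious, something like the sketch below could do the looking for you while the installer's initial screen is still up. It assumes Python is available on the Windows box, and the function name is just made up for illustration. It simply walks the temp dir and reports any directory containing *.mlx files.

```python
import os
import tempfile


def find_mlx_files(temp_root):
    """Return {directory: [names of .mlx files]} for everything under temp_root."""
    found = {}
    for dirpath, _dirnames, filenames in os.walk(temp_root):
        mlx = sorted(name for name in filenames if name.lower().endswith(".mlx"))
        if mlx:
            found[dirpath] = mlx
    return found


if __name__ == "__main__":
    # tempfile.gettempdir() honours the TEMP / TMP environment variables.
    root = tempfile.gettempdir()
    for directory, names in find_mlx_files(root).items():
        print(directory)
        for name in names:
            print("   ", name)
```

Run it once the installer's first screen appears. If nothing gets printed, that download most likely doesn't have the right bits.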

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

Oh, with your lspci command:

[root@ESXI1:~] lspci | grep Mellanox

0000:03:00.0 Network controller: Mellanox Technologies MT26418 [ConnectX VPI - 10GigE / IB DDR, PCIe 2.0 5GT/s] [vmnic4]

0000:81:00.0 Network controller: Mellanox Technologies MT26418 [ConnectX VPI - 10GigE / IB DDR, PCIe 2.0 5GT/s] [vmnic3]

... add the "-vv" option to it:

lspci -s 0000:03:00.0 -vv

&

lspci -s 0000:81:00.0 -vv

The -s bit is a selector for the card, so you don't need to do the "grep Mellanox" on the end with those. The "-v" bit turns on verbose, so it should give more info. The more v's you give it, the more info shown. eg "-vv" "-vvv". Pretty sure the revision (A1, etc) is one of the fields given. I think it's with "-vv", but it could be "-v" or "-vvv". :)

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

For finding the driver package on the HP website, their model numbers should do the trick. eg "483514-B21".

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

Ahhh, this should help: https://www.servethehome.com/custom-firmware-mellanox-oem-infiniband-rdma-windows-server-2012/

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

> Been there many times. His dl link for his don't work.. tried following to create own and can't find .mlx file and files not in temp

Ahhh, damn.

I was thinking about it later on, and I think the cards I updated were actually later model ones (ConnectX-2 series) than the MHGH28-XTC ones. I used to use MHGH28-XTC ones a lot - very cheap on Ebay for years - but when they mostly disappeared the ConnectX-2 ones started to be more common. Thus the need to upgrade the firmware for those when bought from Ebay.

With your setup, I'm not sure the RDMA mode enabled by the newer firmware would be useful anyway. It's not something that FreeNAS can make use of. It's purely for connecting to a Windows based storage server. So, I don't think you're actually missing out.

Not sure if that consolation helps though. ;)

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

> lspci: invalid option -- 's'

Hmmm, looks like ESXi provides a slightly different (older?) version of lspci.

In that case, maybe try it as just a straight "lspci -vvv", which will display super extended info for all of your cards.

You can direct that output to a file with the standard unix redirection approach "> somefile.txt". eg:

lspci -vvv > somefile.txt

When that finishes running, somefile.txt should have the info in it. Transfer that off the ESXi box to your desktop (that's up to you), and you should be able to open it up in notepad/wordpad/whatever to find the Mellanox stuff.
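If the file turns out to be huge, a small script along these lines could pull out just the Mellanox entries once it's on your desktop. This is only a sketch: it assumes the verbose output separates devices with blank lines (the usual lspci layout, though the ESXi variant may differ), and the function name is made up for illustration.

```python
import sys


def mellanox_blocks(lspci_text, keyword="Mellanox"):
    """Split verbose lspci output into per-device blocks, keeping those that mention keyword."""
    blocks = []
    current = []
    for line in lspci_text.splitlines():
        if line.strip():
            current.append(line)
        elif current:
            # A blank line ends the current device block.
            blocks.append("\n".join(current))
            current = []
    if current:
        blocks.append("\n".join(current))
    return [block for block in blocks if keyword.lower() in block.lower()]


if __name__ == "__main__":
    # Usage: python this_script.py somefile.txt
    if len(sys.argv) > 1:
        with open(sys.argv[1], errors="replace") as fh:
            for block in mellanox_blocks(fh.read()):
                print(block)
                print()
```

The revision info (if the ESXi lspci reports it at all) should then be somewhere in the printed blocks.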

JustinClift

Patron

- Joined

- Apr 24, 2016

- Messages

- 287

https://www.dropbox.com/s/snazxrg4g3jwr0g/Firmware.rar?dl=0

Let me know if link works Justin.
