BFulford

Hello, and thank you for taking the time to read this and consider an answer.

I am running FreeNAS-11.3-U3.2 on a system with an i5 and 32 GB of RAM. I have one pool made up of three RAIDZ1 vdevs. Two of the vdevs have four 8TB drives each, and the third has four 5TB drives. The system boots from an M.2 drive.

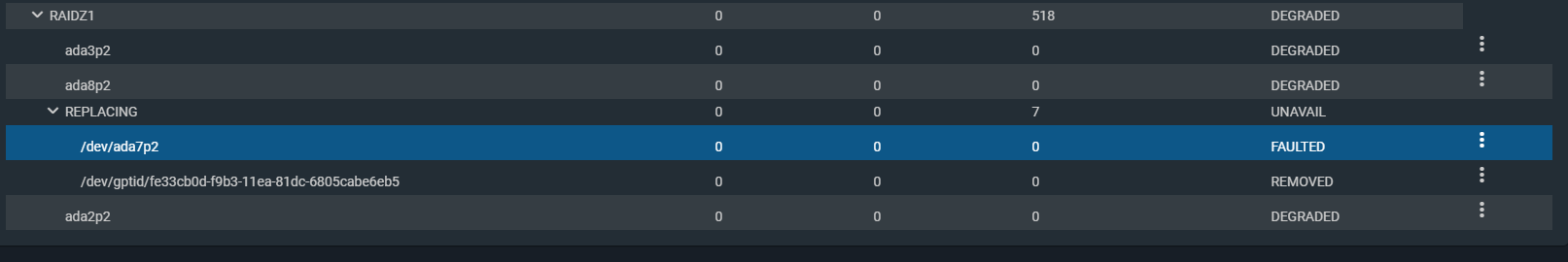

My issue started when a drive faulted. I purchased a new 8TB Barracuda drive and plugged it in; the system registered it and even gave it a name. This is where I first saw the issue: the failed drive no longer showed up under Disks. In the pool status I took the faulted drive offline and then told the pool to replace it with the new disk. Everything seemed fine until the replacement failed. There was no error message; the new disk just never took over, and the pool's Status section still shows the replacement as in progress. Both the failed disk and the new disk are now displayed as ada7 in the Status menu. When I go to Disks, the failed disk is not there, but the new one is still ada7.

So I shut the system down and removed the faulted drive. When it came back up, the pool was still degraded; the faulted disk now shows as removed, and the new disk (ada7) shows as faulted in the Status menu. When I go to Disks now, it says ada7 is not in use.

I cannot wipe it. I get: [EFAULT] Command gpart create -s gpt /dev/ada7 failed (code 1): gpart: arg0 'ada7': Invalid argument.

I also cannot run gpart destroy -F ada7; it returns: gpart: arg0 'ada7': Invalid argument.

The system almost seems confused about which disk is the faulted one and which is the new one, because the old disk died and dropped off while I was trying to replace it.

Any ideas would be most appreciated.
