RegularJoe (Patron; joined Aug 19, 2013; 330 messages)

Hi All,

I might be looking at the wrong data or in the wrong place. I am really just trying to establish a baseline so I can see how this system performs as it ages, and so that when performance does degrade I know when to look and what to look for.

I am working on the assumption that longer, slower scrubs mean slower disk performance for clients. If I know that 24 TB of data can be scrubbed in 6 hours, and after xx days my real data takes 12, 24, or 48 hours, then I need to look at fragmentation or some other issue.
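A minimal sketch of that baseline comparison, assuming scrub durations are noted by hand in hours and sizes in decimal TB. The function names and the 2x alert threshold are hypothetical choices; 2x is simply the first case above (6 h growing to 12 h). For the numbers above, `scrub_rate 24 6` works out to about 1.11 GB/s.

```sh
# scrub_rate TB HOURS: average scrub throughput in GB/s (decimal units).
scrub_rate() {
    awk -v tb="$1" -v h="$2" 'BEGIN { printf "%.2f\n", tb * 1000 / (h * 3600) }'
}

# slowdown BASELINE_HOURS CURRENT_HOURS: ratio of current to baseline
# duration; appends a hint once the ratio reaches the (assumed) 2x threshold.
slowdown() {
    awk -v b="$1" -v c="$2" 'BEGIN {
        r = c / b
        printf "%.1fx", r
        if (r >= 2) printf " (check fragmentation and disk health)"
        printf "\n"
    }'
}
```

For example, `slowdown 6 48` would report an 8.0x increase plus the hint.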

I looked at another pair of FreeNAS (FN) nodes that serve files over NFSv3 to VMware for VDP backups, and they are so fragmented that scrubs take a long time even with very little data on them.
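To put a number on fragmentation, `zpool list` can report each pool's `capacity` and `fragmentation` properties. Note that ZFS's fragmentation figure describes how fragmented the pool's free space is, not how fragmented existing files are, so it is an indirect signal at best. The filter below is a sketch; `flag_frag` and the 50% cutoff are hypothetical.

```sh
# flag_frag THRESHOLD: read `zpool list -H -o name,capacity,fragmentation`
# (tab-separated, values like "78%") on stdin and print pools whose
# fragmentation is at or above THRESHOLD percent. Pools reporting "-" are skipped.
flag_frag() {
    awk -F '\t' -v t="$1" '{
        frag = $3
        sub(/%$/, "", frag)
        if (frag != "-" && frag + 0 >= t) print $1 " cap=" $2 " frag=" $3
    }'
}

# On the NAS (illustrative):
#   zpool list -H -o name,capacity,fragmentation | flag_frag 50
```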

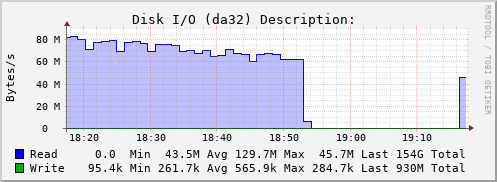

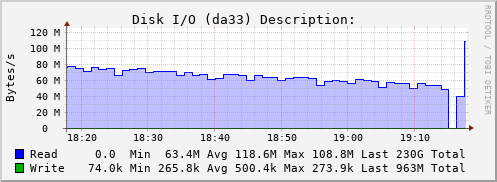

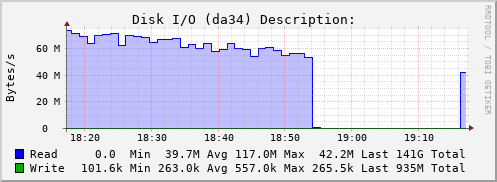

I have a server that I am testing before putting it into production. I filled the disks to 78% capacity with random data and then started a scrub. So far I have tested a 12-vdev stripe of 3-way mirrors as well as a 1-vdev stripe across two HP D2600 SAS shelves with 3 TB Seagate SAS drives. The speed looks good for hours, then drops all the way to 0 MB per minute before climbing back to 1.45 GB per minute on the zpool. Nothing else is using these pools while the test runs. The server is a Dell R720xd with 192 GB of RAM and LSI HBAs running firmware version 20.
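To pin down exactly when the slowdown starts, it may help to sample the scrub rate on a fixed schedule rather than watching the GUI. A rough sketch, assuming the newer `zpool status` progress line ("... scanned at X/s, ... issued at Y/s, ... total"); older ZFS releases print different text, in which case `issued_rate` returns nothing. Both helpers and the log path are hypothetical.

```sh
# issued_rate: extract the "issued at <rate>" value from `zpool status` text.
issued_rate() {
    sed -n 's/.*issued at \([^,]*\),.*/\1/p'
}

# log_scrub POOL [INTERVAL]: while a scrub is running on POOL, print a
# timestamped rate sample every INTERVAL seconds (default 300).
log_scrub() {
    pool=$1
    interval=${2:-300}
    while zpool status "$pool" | grep -q 'scrub in progress'; do
        printf '%s %s\n' "$(date '+%F %T')" "$(zpool status "$pool" | issued_rate)"
        sleep "$interval"
    done
}

# Illustrative usage on the NAS:
#   zpool scrub vola && log_scrub vola 300 >> /root/scrub-vola.log
```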

I also observed this issue on FN 11.2-U6.

No other SMART tests or scrubs are running at the same time.

I checked the hard drive temperature reporting in the legacy interface, and temperatures look consistent across all drives.

A short SMART test runs every day at 7 am.

NetData is not running as a service.

Compression is off.

You can try it yourself. I think the key is having enough data that the scrub runs for at least 6 hours, and testfile.dat should live on a separate, very fast zpool so that reading it does not become the bottleneck.

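```sh
# Create a 10 GiB file of random data (1M x 10240); ideally on a separate, fast pool
dd if=/dev/random of=/testfile.dat bs=1M count=10240
# Copy it 2,500 times into the current directory on the pool under test (needs bash or zsh for {1..2500})
for i in {1..2500}; do cp -v /testfile.dat "testfile$i.dat"; done
```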
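The fixed count works out to 2,500 × 10 GiB = 25,000 GiB, about 24.4 TiB. As an alternative, the fill can be driven by the pool's reported capacity so it stops at the intended percentage regardless of pool size. This is a hypothetical helper, not part of the procedure above; it assumes `zpool list -H -o capacity POOL` prints a value like `78%`, and it may overshoot slightly because capacity is only updated as writes are committed.

```sh
# fill_to POOL TARGET_PCT SRC: copy SRC into the current directory as
# testfile1.dat, testfile2.dat, ... until POOL reports at least TARGET_PCT
# capacity. Returns 1 if a copy fails (e.g. the pool is full).
fill_to() {
    pool=$1 target=$2 src=$3 i=1
    while [ "$(zpool list -H -o capacity "$pool" | tr -d '%')" -lt "$target" ]; do
        cp "$src" "testfile$i.dat" || return 1
        i=$((i + 1))
    done
}

# Illustrative usage (assumes the pool is mounted at /mnt/vola):
#   cd /mnt/vola && fill_to vola 78 /testfile.dat
```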

Tonight I am scrubbing vola and volb at the same time to see whether the slowdown at the 5-hour mark hits both pools at the same time.
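With a timestamped rate log for each pool, comparing when each one first drops below some floor shows whether the stalls line up. This sketch assumes log lines of the form `YYYY-MM-DD HH:MM:SS RATE` (for example `880M/s`), with the binary K/M/G/T suffixes that `zpool status` uses; `first_below` and the 100 MB/s floor are hypothetical.

```sh
# first_below FLOOR_MB: print the first log line whose rate (field 3)
# is below FLOOR_MB megabytes per second, then stop.
first_below() {
    awk -v t="$1" '
    function to_mb(r,   u, n) {
        sub(/\/s$/, "", r)          # drop the trailing "/s"
        u = substr(r, length(r))    # unit suffix, if any
        n = r + 0                   # leading numeric part
        if (u == "K") return n / 1024
        if (u == "M") return n
        if (u == "G") return n * 1024
        if (u == "T") return n * 1048576
        return n / 1048576          # bare number or "B": bytes per second
    }
    to_mb($3) < t { print; exit }'
}

# Illustrative usage, one log per pool:
#   first_below 100 < scrub-vola.log
#   first_below 100 < scrub-volb.log
```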
