Hello everyone,

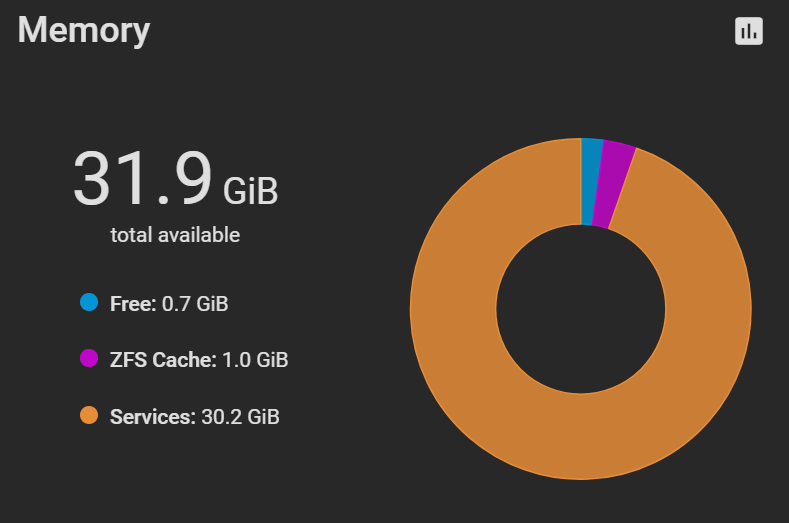

I am in the process of migrating to TrueNAS-13.0-U1.

I previously used MergerFS on Ubuntu Server, i.e. a JBOD setup in which each disk stores different files with no redundancy.

My configuration is stock, apart from changing the timezone, adding a user for SMB shares, and enabling SSH so I can run Rsync. I am using Rsync so that I can move data from up to 15 disks at the same time, but I am already hitting a bottleneck with just a single disk.

I have created a 6-disk RAIDZ2 pool from 8 TB disks, with ZSTD-1 compression.

I have added my 2 TB PCI-E 4.0 NVMe SSD as cache.

The CPU is a Ryzen 5800X.

Here are the commands I run:

```shell
gpart show                      # to get the disk number and partition
kldload fusefs                  # so I can use ntfs-3g
ls /dev | grep da0              # to find the correct partition
mkdir /mnt/disk1                # create folder to mount disk
ntfs-3g /dev/da0p1 /mnt/disk1/  # mount disk
rsync -P -a --remove-source-files /mnt/disk1/ /mnt/storage/storage/  # start transfer
```
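To illustrate what I mean by moving data from several disks at once, here is a rough sketch rather than the exact script I use. The function name `migrate_disks`, the `DRY_RUN` switch, and any mount points beyond `/mnt/disk1` are assumptions made up for this example. It only prints the Rsync commands unless `DRY_RUN=0` is set.

```shell
# Hypothetical helper: one rsync per already-mounted source disk.
# DEST matches the dataset path used above; DRY_RUN defaults to 1 (print only).
DEST=/mnt/storage/storage/

migrate_disks() {
    for src in "$@"; do
        if [ "${DRY_RUN:-1}" = 1 ]; then
            # Show the command that would be run for this disk.
            echo "rsync -P -a --remove-source-files $src/ $DEST"
        else
            # Start the transfer in the background so the disks run in parallel.
            rsync -P -a --remove-source-files "$src/" "$DEST" &
        fi
    done
    # Block until every background transfer has finished.
    wait
}

# Example: migrate_disks /mnt/disk1 /mnt/disk2
```

With several transfers running in the background, the `-P` progress output of the individual Rsync processes will be interleaved in the same terminal.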

At first the disk is the bottleneck, but gradually my memory fills up and the speed drops to almost a quarter, because the transfer runs in bursts and then pauses for ~2 seconds before continuing. CPU usage is always below 10%, often at just 1-2%.

I have used the same Rsync command when I had Ubuntu Server installed without any bottlenecks. Running multiple Rsync instances is still no problem at all because the disks are always the bottleneck. So I know that the hardware works. I ran Ubuntu Server this morning just fine.

I have tried 3 different disks, same result. I have also tried without the 2 TB SSD cache.

The cache fills up as quickly as I transfer files: transferring 30 GB results in roughly 30 GiB of memory usage under Services.
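Strictly speaking, GB and GiB are different units, so the two figures only match approximately. A small conversion sketch (the function name `gb_to_gib` is just for illustration):

```shell
# Convert decimal gigabytes (10^9 bytes) to binary gibibytes (2^30 bytes).
gb_to_gib() {
    awk -v gb="$1" 'BEGIN { printf "%.2f\n", gb * 1000000000 / 1073741824 }'
}

gb_to_gib 30   # prints 27.94
```

So 30 GB of transferred files corresponds to about 27.94 GiB, which is close enough that the memory usage still tracks the amount of data transferred.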

When I stop the transfers, the Services memory usage stays the same. Rebooting fixes the issue, until I start transferring again.
