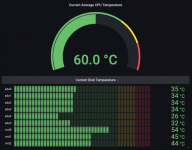

I have a mATX X11SSH-F board (3x PCIe slots). A Supermicro AOC-SLG3-2M2 card is in the middle PCIe slot (the only slot with bifurcation), and right above it is a Dell H310 card. The problem is that the H310 gets HOT. I have 100 GB P4801X drives (as SLOG) in the AOC-SLG3-2M2, and I'm seeing hot temps on both the Optane drives and the Dell H310. The case is a Fractal 804. I've seen a few 3D-printable fan shrouds/ducts, but nothing specific to that combination. I'm worried because I'm seeing very high temps at idle, and that's before I even have pools created or a system actually running.

I've seen @jgreco cringe at the thought of single-point-of-failure cooling, and I agree. If these things are HOT now, before I even have pools created, very bad things could happen if I don't have some reliable redundancy. Two Noctua NF-A4-10 fans fit perfectly in a small rectangular mesh section next to the PCIe slots. I'm thinking about a fan duct/shroud that pulls air from inside the case, flows it over both the Dell H310 and the AOC-SLG3-2M2, and then exhausts out the rear of the case. Are two little Noctua fans enough to consider it redundant? Should I use a different brand/model of fan, or more fans?

I am basically trying to compensate for not having a hot/cold aisle and not having proper airflow (server hardware in a desktop chassis). Is there anything out there I can 3D print for either of those individual cards, or for the combination of those two cards sitting in adjacent PCIe slots?

I have messed around with some of the fan scripts (zones for PWM fan speed based on CPU temp vs. HDD temp). Is there anything available in the non-enterprise edition that would monitor other temps, such as the HBA and NVMe drives? Is there a community/non-enterprise plugin for an APC battery backup (USB or network)?
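To make the question concrete, here is a rough sketch of what I'm picturing for the NVMe side. It is not a working fan script. It assumes the drive temperature can be read from the JSON that `smartctl -j -a` prints, under a `temperature.current` field. The function names and the 40/70 °C thresholds are made up for illustration, and I have no idea yet whether the H310 exposes a temperature at all.

```python
import json

def nvme_temp_c(smartctl_json):
    """Pull the current temperature (deg C) out of smartctl JSON text.

    Assumes the layout {"temperature": {"current": N}}; returns None
    if that field is missing.
    """
    data = json.loads(smartctl_json)
    return data.get("temperature", {}).get("current")

def duty_for_temp(temp_c, low=40, high=70, min_duty=30, max_duty=100):
    """Map a temperature to a PWM duty cycle (%) with a linear ramp.

    The thresholds are hypothetical placeholders, not recommended values.
    A missing reading falls back to full speed.
    """
    if temp_c is None:
        return max_duty
    if temp_c <= low:
        return min_duty
    if temp_c >= high:
        return max_duty
    fraction = (temp_c - low) / (high - low)
    return round(min_duty + fraction * (max_duty - min_duty))

if __name__ == "__main__":
    sample = '{"temperature": {"current": 55}}'  # hand-written sample, not real output
    print(duty_for_temp(nvme_temp_c(sample)))
```

The idea would be to feed the hottest reading across the drives into whatever sets the fan zone duty, and to treat a missing reading as a reason to run the fans flat out.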

Last thought/comment. I don't really like this idea, but there is plenty of room inside the chassis: are there individual cooling shrouds available anywhere to 3D print for either of those cards? I could use PCIe ribbon cables to relocate the cards within the chassis so there is enough distance to give each its own cooling. (This seems like over-complicating things and adding more points of failure.)

Thanks.
