After upgrading from FreeNAS-9.10.2-U6 (561f0d7a1) to FreeNAS-11.1-U7, I've lost a pool: 4 disks are marked as UNAVAIL (more precisely, 4 of the 6 disks in one RAIDZ1 vdev).

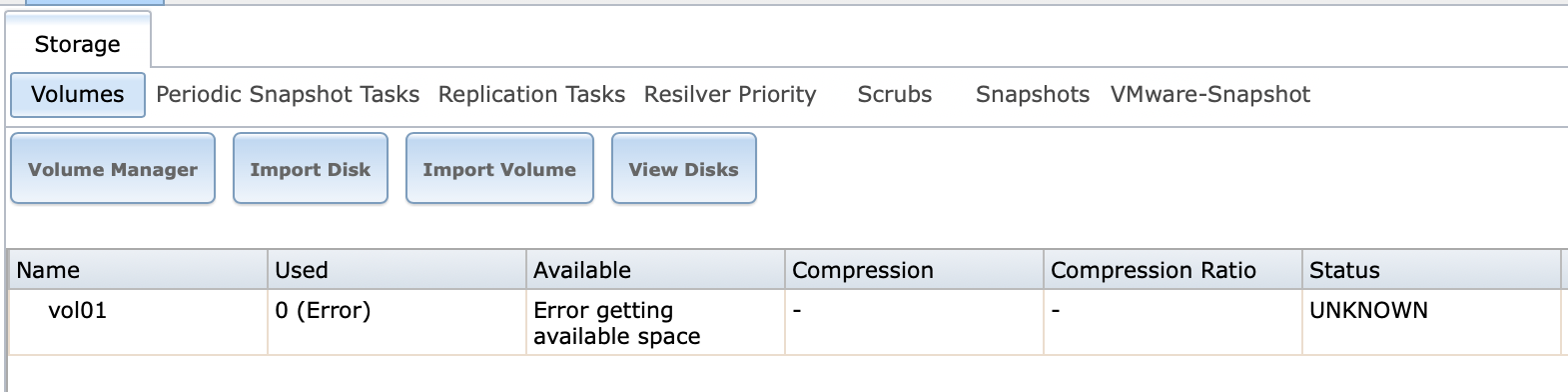


In the GUI, I get this message:

Before (after expanding the pool in January 2019):

```
[root@freenas02] /mnt/vol01# zpool list -v
NAME SIZE ALLOC FREE EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT
freenas-boot 7.44G 5.70G 1.74G - - 76% 1.00x ONLINE -
  mirror 7.44G 5.70G 1.74G - - 76%
    gptid/bd74e4fb-4470-11e5-bb9f-0014fd1702be - - - - - -
    gptid/1d862695-c6e5-11e4-b757-0014fd1702be - - - - - -
vol01 32.5T 15.2T 17.3T - 19% 46% 1.00x ONLINE /mnt
  raidz1 16.2T 15.2T 1.04T - 39% 93%
    gptid/bd56e573-8b83-11e6-9a21-d05099c10963 - - - - - -
    gptid/d65641e2-b536-11e6-9d70-d05099c10963 - - - - - -
    gptid/bee5773e-8b83-11e6-9a21-d05099c10963 - - - - - -
    gptid/bf9a5e0b-8b83-11e6-9a21-d05099c10963 - - - - - -
    gptid/c0540768-8b83-11e6-9a21-d05099c10963 - - - - - -
    gptid/c110d0a2-8b83-11e6-9a21-d05099c10963 - - - - - -
  raidz1 16.2T 17.0M 16.2T - 0% 0%
    da0 - - - - - -
    da1 - - - - - -
    da2 - - - - - -
    da3 - - - - - -
    da4 - - - - - -
    da5 - - - - - -
```

After:

```
root@freenas02:~ # zpool import -V
   pool: vol01
     id: 10112117251247527724
  state: UNAVAIL
 status: One or more devices are missing from the system.
 action: The pool cannot be imported. Attach the missing
         devices and try again.
    see: http://illumos.org/msg/ZFS-8000-3C
 config:

  vol01 UNAVAIL insufficient replicas
    raidz1-0 ONLINE
      gptid/bd56e573-8b83-11e6-9a21-d05099c10963 ONLINE
      gptid/d65641e2-b536-11e6-9d70-d05099c10963 ONLINE
      gptid/bee5773e-8b83-11e6-9a21-d05099c10963 ONLINE
      gptid/bf9a5e0b-8b83-11e6-9a21-d05099c10963 ONLINE
      gptid/c0540768-8b83-11e6-9a21-d05099c10963 ONLINE
      gptid/c110d0a2-8b83-11e6-9a21-d05099c10963 ONLINE
    raidz1-1 UNAVAIL insufficient replicas
      da0 ONLINE
      da1 ONLINE
      16339273880762305465 UNAVAIL cannot open
      10436155621961562286 UNAVAIL cannot open
      5238054744404005019 UNAVAIL cannot open
      3466877252226870208 UNAVAIL cannot open
```

I would be surprised if the HBA (or channel A of the HBA) or 4 disks had failed simultaneously, although I know it's possible.
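The four missing members are identified only by their vdev GUIDs. A minimal sketch, assuming the `zpool import` output has been saved to a text file (the helper name `unavail_guids` and the file name `import.txt` are hypothetical), pulls those GUIDs out so they can be matched against the `vdev_guid=` values in the boot log:

```sh
#!/bin/sh
# Hypothetical helper: print the GUID of every leaf device that `zpool import`
# reports as "UNAVAIL cannot open". Reads saved `zpool import` output on stdin.
# Whole vdevs such as "raidz1-1 UNAVAIL insufficient replicas" are skipped,
# because only the leaf devices carry the "cannot open" reason.
unavail_guids() {
    awk '$2 == "UNAVAIL" && $3 == "cannot" && $4 == "open" { print $1 }'
}

# Example usage (import.txt is a hypothetical file name):
#   zpool import > import.txt
#   unavail_guids < import.txt
```

With the output above it would print 16339273880762305465, 10436155621961562286, 5238054744404005019 and 3466877252226870208; all four also appear in the 14:49:48 `vdev state changed` messages in the boot log.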

Here's what the disks look like now individually:

```
root@freenas02:~ # camcontrol devlist -v
scbus0 on mps0 bus 0:
<ATA WDC WD30EFRX-68E 0A80> at scbus0 target 4 lun 0 (pass0,da0)
<ATA WDC WD30EFRX-68E 0A82> at scbus0 target 5 lun 0 (pass1,da1)
<ATA WDC WD30EFRX-68E 0A80> at scbus0 target 6 lun 0 (pass2,da2)
<ATA WDC WD30EFRX-68E 0A80> at scbus0 target 8 lun 0 (pass3,da3)
<ATA WDC WD30EFRX-68E 0A80> at scbus0 target 9 lun 0 (pass4,da4)
<ATA WDC WD30EFRX-68E 0A80> at scbus0 target 10 lun 0 (pass5,da5)
<> at scbus0 target -1 lun ffffffff ()
scbus1 on ahcich0 bus 0:
<WDC WD30EFRX-68AX9N0 80.00A80> at scbus1 target 0 lun 0 (pass6,ada0)
<> at scbus1 target -1 lun ffffffff ()
scbus2 on ahcich1 bus 0:
<WDC WD30EFRX-68AX9N0 80.00A80> at scbus2 target 0 lun 0 (pass7,ada1)
<> at scbus2 target -1 lun ffffffff ()
scbus3 on ahcich2 bus 0:
<WDC WD30EFRX-68AX9N0 80.00A80> at scbus3 target 0 lun 0 (pass8,ada2)
<> at scbus3 target -1 lun ffffffff ()
scbus4 on ahcich3 bus 0:
<WDC WD30EFRX-68EUZN0 82.00A82> at scbus4 target 0 lun 0 (pass9,ada3)
<> at scbus4 target -1 lun ffffffff ()
scbus5 on ahcich4 bus 0:
<WDC WD30EFRX-68EUZN0 82.00A82> at scbus5 target 0 lun 0 (pass10,ada4)
<> at scbus5 target -1 lun ffffffff ()
scbus6 on ahcich5 bus 0:
<WDC WD30EFRX-68EUZN0 82.00A82> at scbus6 target 0 lun 0 (pass11,ada5)
<> at scbus6 target -1 lun ffffffff ()
scbus7 on camsim0 bus 0:
<> at scbus7 target -1 lun ffffffff ()
scbus8 on umass-sim0 bus 0:
<SanDisk Cruzer Fit 1.26> at scbus8 target 0 lun 0 (da6,pass12)
scbus-1 on xpt0 bus 0:
<> at scbus-1 target -1 lun ffffffff (xpt0)

root@freenas02:~ # gpart show
=> 34 5860533101 da2 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 da3 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 da4 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 da5 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 15633341 da6 GPT (7.5G)
    34 1024 1 freebsd-boot (512K)
    1058 6 - free - (3.0K)
    1064 15632304 2 freebsd-zfs (7.5G)
    15633368 7 - free - (3.5K)

=> 34 15633341 da7 GPT (7.5G)
    34 1024 1 freebsd-boot (512K)
    1058 6 - free - (3.0K)
    1064 15632304 2 freebsd-zfs (7.5G)
    15633368 7 - free - (3.5K)

=> 34 5860533101 ada0 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 ada1 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 ada2 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 ada3 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 ada4 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 ada5 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 da0 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

=> 34 5860533101 da1 GPT (2.7T)
    34 94 - free - (47K)
    128 4194304 1 freebsd-swap (2.0G)
    4194432 5856338696 2 freebsd-zfs (2.7T)
    5860533128 7 - free - (3.5K)

root@freenas02:~ # glabel status
Name Status Components
gptid/b028e667-f483-11e4-a2e2-ac220b4f4343 N/A da2p2
gptid/aec2bf48-f483-11e4-a2e2-ac220b4f4343 N/A da3p2
gptid/afc5b4b8-f483-11e4-a2e2-ac220b4f4343 N/A da4p2
gptid/be17ff68-8b83-11e6-9a21-d05099c10963 N/A da5p2
gptid/bd45140b-4470-11e5-bb9f-0014fd1702be N/A da6p1
gptid/bd74e4fb-4470-11e5-bb9f-0014fd1702be N/A da6p2
gptid/1d7804a0-c6e5-11e4-b757-0014fd1702be N/A da7p1
gptid/1d862695-c6e5-11e4-b757-0014fd1702be N/A da7p2
gptid/bf9a5e0b-8b83-11e6-9a21-d05099c10963 N/A ada0p2
gptid/c0540768-8b83-11e6-9a21-d05099c10963 N/A ada1p2
gptid/bee5773e-8b83-11e6-9a21-d05099c10963 N/A ada2p2
gptid/c110d0a2-8b83-11e6-9a21-d05099c10963 N/A ada3p2
gptid/bd56e573-8b83-11e6-9a21-d05099c10963 N/A ada4p2
gptid/d65641e2-b536-11e6-9d70-d05099c10963 N/A ada5p2
gptid/b07c7234-f483-11e4-a2e2-ac220b4f4343 N/A da0p1
gptid/b088f945-f483-11e4-a2e2-ac220b4f4343 N/A da0p2
gptid/b121c1c9-f483-11e4-a2e2-ac220b4f4343 N/A da1p1
gptid/b12d6a01-f483-11e4-a2e2-ac220b4f4343 N/A da1p2
```
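To see where each pool member lives now, the `gptid/...` names from the "before" `zpool list -v` output can be looked up in the `glabel status` table. Note that the second raidz1 vdev was recorded by raw device name (`da0` to `da5`), so it has no label to look up. A minimal sketch, assuming both outputs have been saved to text files (the helper name `map_members` and the file names are hypothetical):

```sh
#!/bin/sh
# Hypothetical helper: map_members GLABEL_FILE POOL_LISTING_FILE
# GLABEL_FILE holds saved `glabel status` output; POOL_LISTING_FILE holds
# saved `zpool list -v` output. For every pool member it prints the member
# name followed by the provider that currently carries it.
map_members() {
    awk '
        # First file (glabel status): "gptid/<uuid> N/A <provider>"
        FNR == NR {
            if ($1 ~ /^gptid\//) provider[$1] = $3
            next
        }
        # Second file (pool listing): members referenced by gptid label
        $1 ~ /^gptid\// {
            print $1, (($1 in provider) ? provider[$1] : "NOT FOUND")
            next
        }
        # Members referenced by raw device node (e.g. da0) carry no label
        $1 ~ /^a?da[0-9]+$/ { print $1, "(raw device, no gptid)" }
    ' "$1" "$2"
}

# Example usage (file names are hypothetical):
#   glabel status > glabel.txt
#   map_members glabel.txt before.txt
```

With the outputs above, the six raidz1-0 labels resolve to ada0p2 through ada5p2, and `da0` to `da5` are reported as raw devices.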

Here's the part of the boot log related to the disks:

```
…
Aug 17 13:46:54 freenas02 ahci0: <Intel Lynx Point AHCI SATA controller> port 0xf0b0-0xf0b7,0xf0a0-0xf0a3,0xf090-0xf097,0xf080-0xf083,0xf060-0xf07f mem 0xf7c15000-0xf7c157ff irq 19 at device 31.2 on pci0
Aug 17 13:46:54 freenas02 ahci0: AHCI v1.30 with 6 6Gbps ports, Port Multiplier not supported
Aug 17 13:46:54 freenas02 ahcich0: <AHCI channel> at channel 0 on ahci0
Aug 17 13:46:54 freenas02 ahcich1: <AHCI channel> at channel 1 on ahci0
Aug 17 13:46:54 freenas02 ahcich2: <AHCI channel> at channel 2 on ahci0
Aug 17 13:46:54 freenas02 ahcich3: <AHCI channel> at channel 3 on ahci0
Aug 17 13:46:54 freenas02 ahcich4: <AHCI channel> at channel 4 on ahci0
Aug 17 13:46:54 freenas02 ahcich5: <AHCI channel> at channel 5 on ahci0
…
Aug 17 13:46:54 freenas02 mps0: SAS Address for SATA device = d2624739dcc8c074
Aug 17 13:46:54 freenas02 mps0: SAS Address for SATA device = d267514bf8ccb972
Aug 17 13:46:54 freenas02 mps0: SAS Address for SATA device = d2624738ddc8c074
Aug 17 13:46:54 freenas02 mps0: SAS Address for SATA device = d2624738d9c3bd76
Aug 17 13:46:54 freenas02 mps0: SAS Address for SATA device = d2624734dfcabe71
Aug 17 13:46:54 freenas02 mps0: SAS Address for SATA device = d2624737dbc8b86d
Aug 17 13:46:54 freenas02 mps0: SAS Address from SATA device = d2624739dcc8c074
Aug 17 13:46:54 freenas02 mps0: SAS Address from SATA device = d267514bf8ccb972
Aug 17 13:46:54 freenas02 mps0: SAS Address from SATA device = d2624738ddc8c074
Aug 17 13:46:54 freenas02 mps0: SAS Address from SATA device = d2624738d9c3bd76
Aug 17 13:46:54 freenas02 mps0: SAS Address from SATA device = d2624734dfcabe71
Aug 17 13:46:54 freenas02 mps0: SAS Address from SATA device = d2624737dbc8b86d
…
Aug 17 13:46:54 freenas02 ada0 at ahcich0 bus 0 scbus1 target 0 lun 0
Aug 17 13:46:54 freenas02 da0 at mps0 bus 0 scbus0 target 4 lun 0
Aug 17 13:46:54 freenas02 ada0: <WDC WD30EFRX-68AX9N0 80.00A80> ACS-2 ATA SATA 3.x device
Aug 17 13:46:54 freenas02 ada0: Serial Number WD-WCC1T0454382
Aug 17 13:46:54 freenas02 da0: <ATA WDC WD30EFRX-68E 0A80> Fixed Direct Access SPC-4 SCSI device
Aug 17 13:46:54 freenas02 ada0: 600.000MB/s transfersda0: Serial Number WD-WMC4N0755697
Aug 17 13:46:54 freenas02 da0: 600.000MB/s transfers
Aug 17 13:46:54 freenas02 da0: Command Queueing enabled
Aug 17 13:46:54 freenas02 da0: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 da0: quirks=0x8<4K>
Aug 17 13:46:54 freenas02 da1 at mps0 bus 0 scbus0 target 5 lun 0
Aug 17 13:46:54 freenas02 da1: <ATA WDC WD30EFRX-68E 0A82> Fixed Direct Access SPC-4 SCSI device
Aug 17 13:46:54 freenas02 da1: Serial Number WD-WCC4N5AHPD25
Aug 17 13:46:54 freenas02 da1: 600.000MB/s transfers
Aug 17 13:46:54 freenas02 da1: Command Queueing enabled
Aug 17 13:46:54 freenas02 da1: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 da1: quirks=0x8<4K>
Aug 17 13:46:54 freenas02 da2 at mps0 bus 0 scbus0 target 6 lun 0
Aug 17 13:46:54 freenas02 (SATA 3.x, UDMA6, PIO 8192bytes)
Aug 17 13:46:54 freenas02 ada0: Command Queueing enabled
Aug 17 13:46:54 freenas02 da2: <ATA WDC WD30EFRX-68E 0A80> Fixed Direct Access SPC-4 SCSI device
Aug 17 13:46:54 freenas02 ada0: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 ada0: quirks=0x1<4K>
Aug 17 13:46:54 freenas02 ada1 at ahcich1 bus 0 scbus2 target 0 lun 0
Aug 17 13:46:54 freenas02 da2: Serial Number WD-WMC4N0764697
Aug 17 13:46:54 freenas02 da2: 600.000MB/s transfersada1: <WDC WD30EFRX-68AX9N0 80.00A80> ACS-2 ATA SATA 3.x device
Aug 17 13:46:54 freenas02 ada1: Serial Number WD-WCC1T0418229
Aug 17 13:46:54 freenas02 ada1: 600.000MB/s transfers
Aug 17 13:46:54 freenas02 da2: Command Queueing enabled
Aug 17 13:46:54 freenas02 da2: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 (SATA 3.x, da2: quirks=0x8<4K>
Aug 17 13:46:54 freenas02 da3 at mps0 bus 0 scbus0 target 8 lun 0
Aug 17 13:46:54 freenas02 da3: <ATA WDC WD30EFRX-68E 0A80> Fixed Direct Access SPC-4 SCSI device
Aug 17 13:46:54 freenas02 da3: Serial Number WD-WMC4N0717874
Aug 17 13:46:54 freenas02 da3: 600.000MB/s transfers
Aug 17 13:46:54 freenas02 da3: Command Queueing enabled
Aug 17 13:46:54 freenas02 UDMA6, PIO 8192bytes)
Aug 17 13:46:54 freenas02 ada1: Command Queueing enabled
Aug 17 13:46:54 freenas02 ada1: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 da3: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 da3: quirks=0x8<4K>
Aug 17 13:46:54 freenas02 da4 at mps0 bus 0 scbus0 target 9 lun 0
Aug 17 13:46:54 freenas02 da4: <ATA WDC WD30EFRX-68E 0A80> Fixed Direct Access SPC-4 SCSI device
Aug 17 13:46:54 freenas02 da4: Serial Number WD-WMC4N0751169
Aug 17 13:46:54 freenas02 da4: 600.000MB/s transfers
Aug 17 13:46:54 freenas02 da4: Command Queueing enabled
Aug 17 13:46:54 freenas02 da4: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 da4: quirks=0x8<4K>
Aug 17 13:46:54 freenas02 da5 at mps0 bus 0 scbus0 target 10 lun 0
Aug 17 13:46:54 freenas02 da5: <ATA WDC WD30EFRX-68E 0A80> Fixed Direct Access SPC-4 SCSI device
Aug 17 13:46:54 freenas02 ada1: quirks=0x1<4K>
Aug 17 13:46:54 freenas02 ada2 at ahcich2 bus 0 scbus3 target 0 lun 0
Aug 17 13:46:54 freenas02 da5: Serial Number WD-WMC4N0743610
Aug 17 13:46:54 freenas02 ada2: <WDC WD30EFRX-68AX9N0 80.00A80> ACS-2 ATA SATA 3.x device
Aug 17 13:46:54 freenas02 da5: 600.000MB/s transfers
Aug 17 13:46:54 freenas02 da5: Command Queueing enabled
Aug 17 13:46:54 freenas02 ada2: Serial Number WD-WCC1T0298346
Aug 17 13:46:54 freenas02 ada2: 600.000MB/s transfers (SATA 3.x, UDMA6, PIO 8192bytes)
Aug 17 13:46:54 freenas02 ada2: Command Queueing enabled
Aug 17 13:46:54 freenas02 ada2: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 da5: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 da5: quirks=0x8<4K>
Aug 17 13:46:54 freenas02 ada2: quirks=0x1<4K>
Aug 17 13:46:54 freenas02 ada3 at ahcich3 bus 0 scbus4 target 0 lun 0
Aug 17 13:46:54 freenas02 ada3: <WDC WD30EFRX-68EUZN0 82.00A82> ACS-2 ATA SATA 3.x device
Aug 17 13:46:54 freenas02 ada3: Serial Number WD-WCC4N2TYF2UN
Aug 17 13:46:54 freenas02 ada3: 600.000MB/s transfers (SATA 3.x, UDMA6, PIO 8192bytes)
Aug 17 13:46:54 freenas02 ada3: Command Queueing enabled
Aug 17 13:46:54 freenas02 ada3: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 ada3: quirks=0x1<4K>
Aug 17 13:46:54 freenas02 ada4 at ahcich4 bus 0 scbus5 target 0 lun 0
Aug 17 13:46:54 freenas02 ada4: <WDC WD30EFRX-68EUZN0 82.00A82> ACS-2 ATA SATA 3.x device
Aug 17 13:46:54 freenas02 ada4: Serial Number WD-WCC4N7FATRR1
Aug 17 13:46:54 freenas02 ada4: 600.000MB/s transfers (SATA 3.x, UDMA6, PIO 8192bytes)
Aug 17 13:46:54 freenas02 ada4: Command Queueing enabled
Aug 17 13:46:54 freenas02 ada4: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 ada4: quirks=0x1<4K>
Aug 17 13:46:54 freenas02 ada5 at ahcich5 bus 0 scbus6 target 0 lun 0
Aug 17 13:46:54 freenas02 ada5: <WDC WD30EFRX-68EUZN0 82.00A82> ACS-2 ATA SATA 3.x device
Aug 17 13:46:54 freenas02 ada5: Serial Number WD-WCC4N4FCUHHY
Aug 17 13:46:54 freenas02 ada5: 600.000MB/s transfers (SATA 3.x, UDMA6, PIO 8192bytes)
Aug 17 13:46:54 freenas02 ada5: Command Queueing enabled
Aug 17 13:46:54 freenas02 ada5: 2861588MB (5860533168 512 byte sectors)
Aug 17 13:46:54 freenas02 ada5: quirks=0x1<4K>
Aug 17 13:46:54 freenas02 Trying to mount root from zfs:freenas-boot/ROOT/11.1-U7 []...
…
Aug 17 14:49:46 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=3671607037181642970
Aug 17 14:49:46 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=9652509477325859936
Aug 17 14:49:47 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=14436258189133544651
Aug 17 14:49:47 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=13407339990873068916
Aug 17 14:49:47 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=11357776941345595226
Aug 17 14:49:47 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=17955406522460418432
Aug 17 14:49:47 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=3535278873160926383
Aug 17 14:49:47 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=15861408793602997199
Aug 17 14:49:48 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=16339273880762305465
Aug 17 14:49:48 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=10436155621961562286
Aug 17 14:49:48 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=5238054744404005019
Aug 17 14:49:48 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=3466877252226870208
…
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=3671607037181642970
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=9652509477325859936
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=14436258189133544651
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=13407339990873068916
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=11357776941345595226
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=17955406522460418432
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=3535278873160926383
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=15861408793602997199
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=16339273880762305465
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=10436155621961562286
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=5238054744404005019
Aug 17 14:51:18 freenas02 ZFS: vdev state changed, pool_guid=10112117251247527724 vdev_guid=3466877252226870208
…
Aug 17 14:53:45 freenas02 ZFS: vdev state changed, pool_guid=3018436033628833179 vdev_guid=13331606250362451550
Aug 17 14:53:46 freenas02 ZFS: vdev state changed, pool_guid=3018436033628833179 vdev_guid=8155704046927289062
…
```
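Because the pool's second vdev was recorded by `daN` names, it may help to pin each name to a drive serial number and compare that mapping across boots. A minimal sketch, assuming the boot messages have been saved to a text file (the helper name `disk_serials` and the file name are hypothetical); the pattern starts matching at the device name, so it also copes with interleaved console lines such as `ada0: 600.000MB/s transfersda0: Serial Number ...`:

```sh
#!/bin/sh
# Hypothetical helper: print "<device> <serial>" pairs found in saved boot
# messages read from stdin, one pair per line, sorted and de-duplicated.
# The leading "a?da" anchors the match on the device name, so text that the
# console glued in front of it (e.g. "transfers") is not included.
disk_serials() {
    grep -oE 'a?da[0-9]+: Serial Number [A-Za-z0-9-]+' |
        sed 's/: Serial Number / /' |
        LC_ALL=C sort -u
}

# Example usage (messages.txt is a hypothetical file name):
#   disk_serials < messages.txt
```

Running it against the boot logs from before and after the upgrade would show whether the same serial numbers ended up behind the same `daN` names.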

I haven't issued any ZFS commands that write or update the pool configuration, and I have only rebooted the system a few times to look at the EFI settings and watch the boot.

I'm OK in terms of backups (I snapshot and replicate this server's data to a twin), but I am concerned that an upgrade seemingly tanked the pool.

Questions:

- Can I recover these 4 disks and import the pool? If so, what are the next steps? (Tweaking a few settings seems faster than destroying the remnants of the pool and then `zfs send`ing 15 TB+ over the wire from the backup server.)

- Are there any known issues with the upgrade to 11.x and a pool that has been extended this way, i.e. with a second raidz1 vdev added in January 2019?

- Should I expect this behaviour when I upgrade the backup server as well?