Hello all,

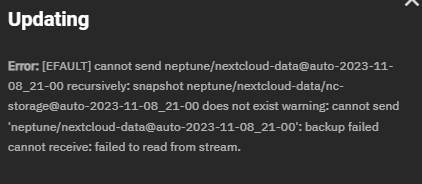

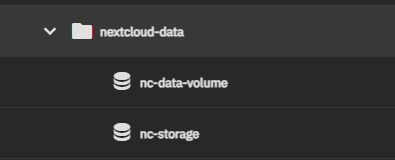

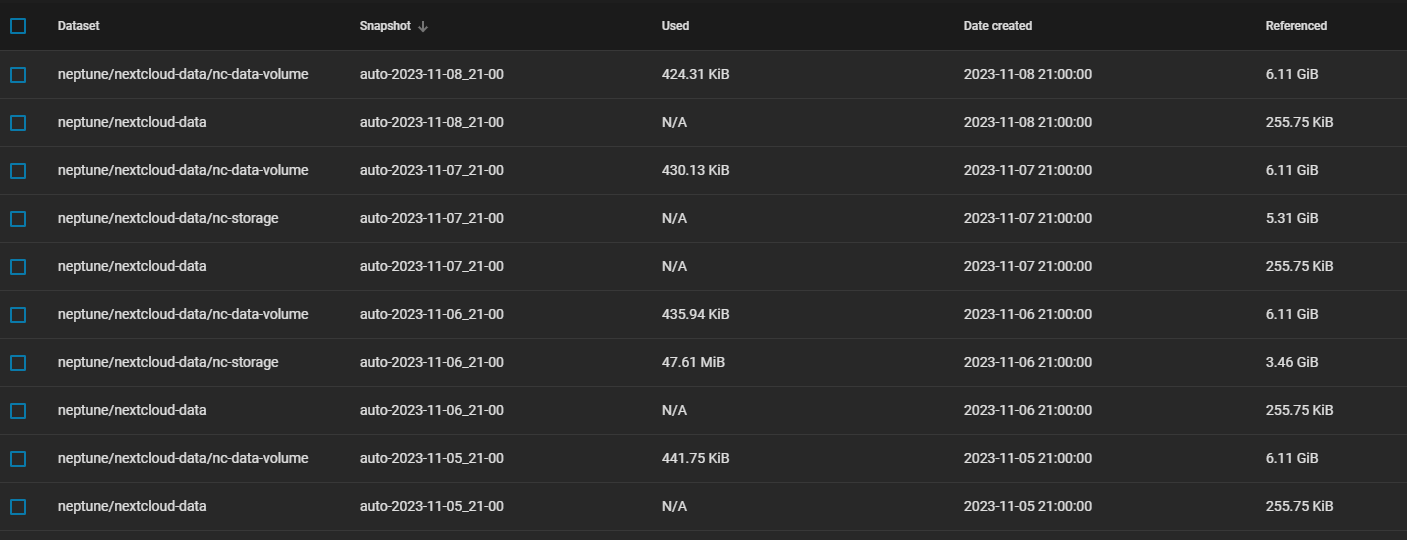

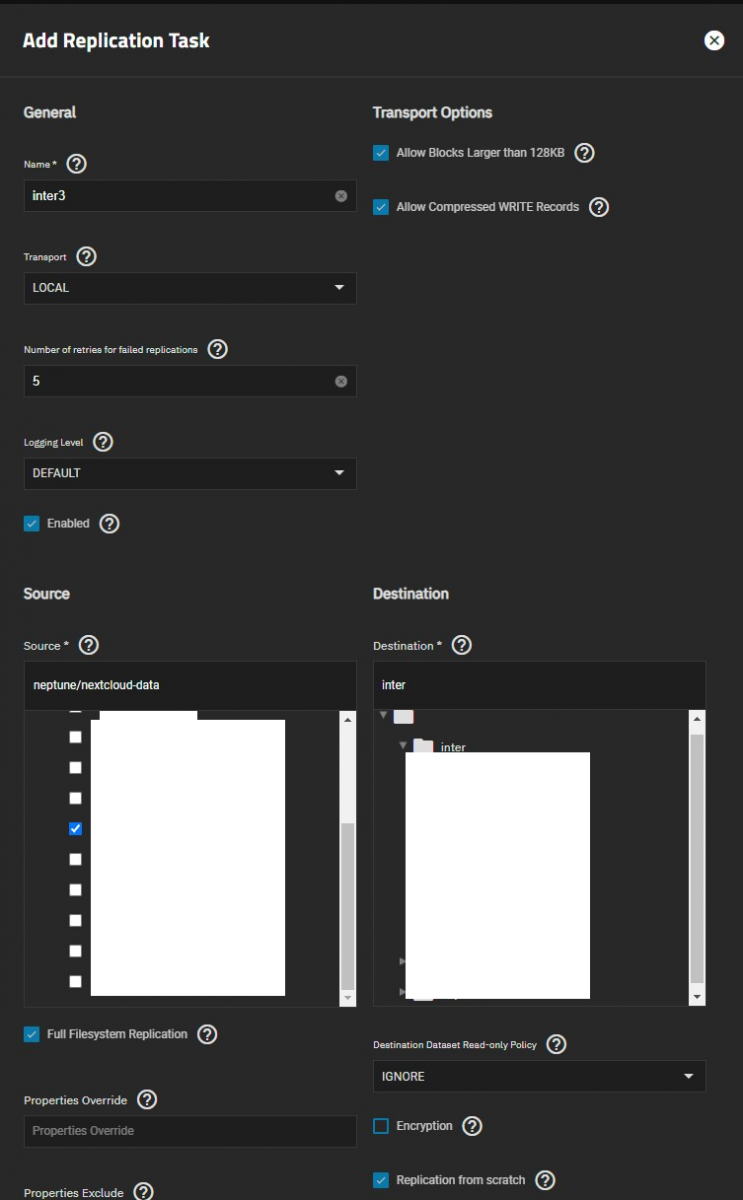

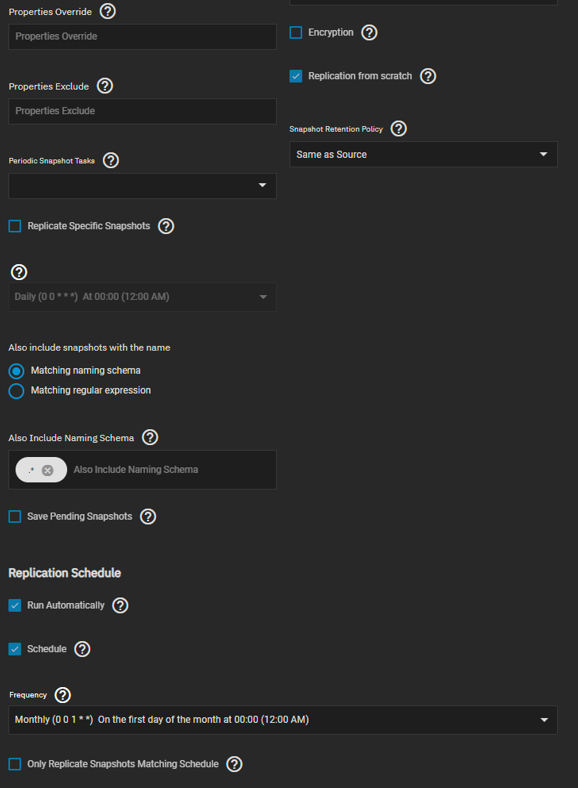

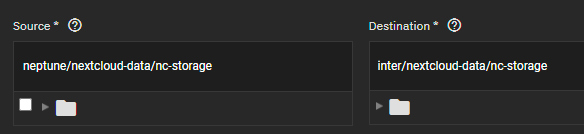

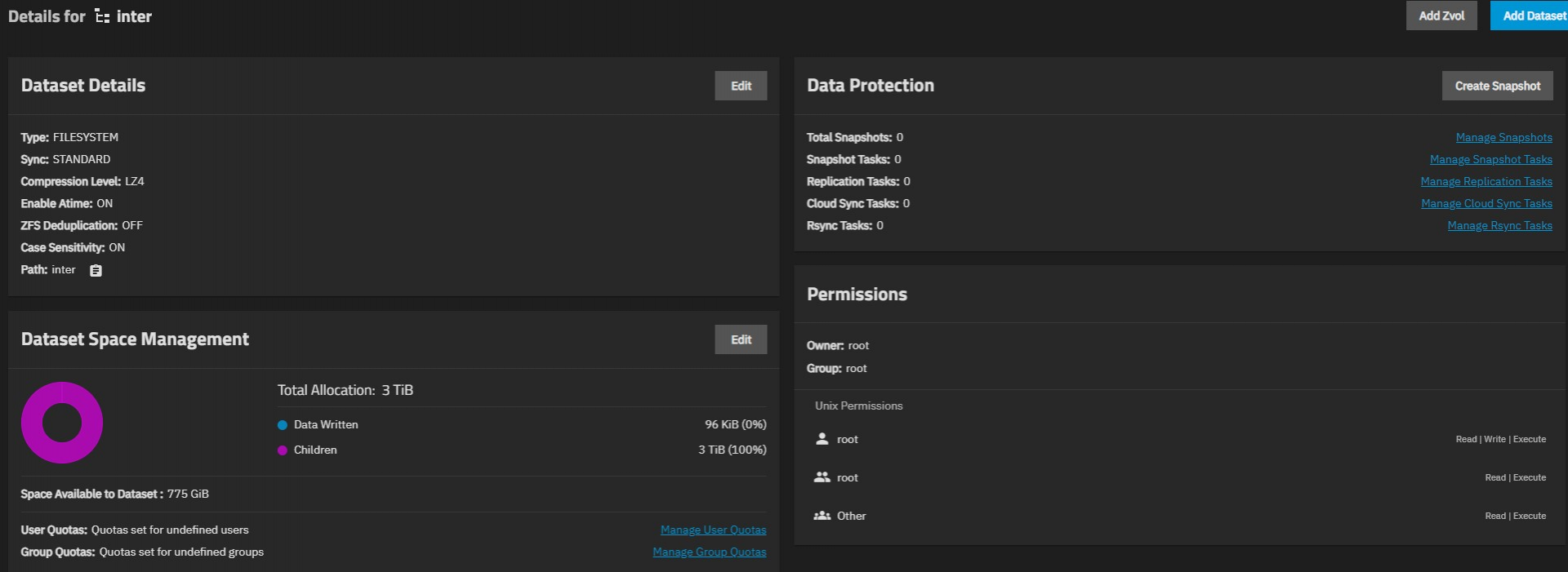

I wanted to back up my pool to prepare for a migration from raidz2 to mirrored vdevs. Most of the datasets replicated fine; however, some of them, for example my Nextcloud storage, exited with errors:

Problem 1

I have "allow empty snapshots" disabled. Is the replication task confused because not all zvols have the same number of snapshots?

The whole dataset was selected for backup as full filesystem replication.

When I created separate tasks to replicate from zvol to zvol directly, it worked. I doubt there's any data under the dataset besides the zvols anyway, so no harm would be done.
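To check whether the zvols really differ in their snapshots, something like the following might help. It is only a sketch: it parses the text printed by `zfs list -H -t snapshot -o name -r <dataset>` and groups snapshot names per dataset. The dataset and snapshot names in the sample are made up, and a zvol with no snapshots at all will not show up in this output.

```python
from collections import defaultdict


def snapshots_per_dataset(zfs_list_output: str) -> dict[str, set[str]]:
    """Group snapshot names by dataset from `zfs list -H -t snapshot -o name` output."""
    grouped = defaultdict(set)
    for line in zfs_list_output.splitlines():
        line = line.strip()
        if not line or "@" not in line:
            continue  # skip blank lines and anything that is not a snapshot
        dataset, snap = line.split("@", 1)
        grouped[dataset].add(snap)
    return dict(grouped)


def common_snapshots(grouped: dict[str, set[str]]) -> set[str]:
    """Snapshot names that exist on every dataset in the mapping."""
    sets = list(grouped.values())
    return set.intersection(*sets) if sets else set()


# Hypothetical sample; paste the real command output here instead.
sample = """\
tank/nextcloud/zvol1@auto-2023-10-01
tank/nextcloud/zvol1@auto-2023-10-02
tank/nextcloud/zvol2@auto-2023-10-02
"""

grouped = snapshots_per_dataset(sample)
for dataset, snaps in sorted(grouped.items()):
    print(dataset, len(snaps))
print("common:", sorted(common_snapshots(grouped)))
```

If the set of common snapshots is empty, or the counts differ a lot, that would at least be consistent with the guess above, though it does not prove that this is what trips up the replication task.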

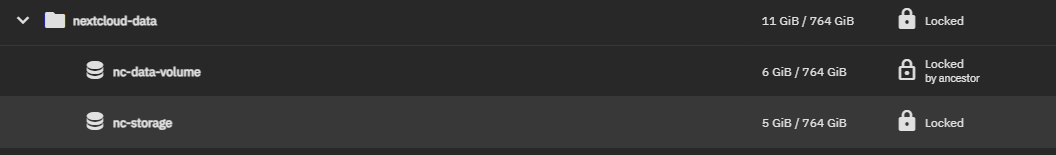

Encryption roots didn't seem to work 100% but that's not too bad I guess:

where it should be unlocked by ancestor for both.
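For anyone wanting to check the encryption roots from the shell rather than the UI, here is a rough sketch. It assumes the tab-separated output of `zfs get -H -r -o name,value encryptionroot <pool>` and lists every dataset that is its own encryption root instead of inheriting from an ancestor. The child dataset names in the sample are invented; only the pool name `inter` comes from my setup.

```python
def own_encryption_roots(zfs_get_output: str) -> list[str]:
    """Return datasets whose encryptionroot is the dataset itself."""
    result = []
    for line in zfs_get_output.splitlines():
        parts = line.split("\t")
        if len(parts) != 2:
            continue  # ignore lines that are not "name<TAB>value"
        name, root = parts
        if root == name:
            result.append(name)
    return result


# Hypothetical sample; unencrypted datasets report "-" as their root.
sample = (
    "inter\tinter\n"
    "inter/nextcloud\tinter\n"
    "inter/nextcloud/zvol1\tinter/nextcloud/zvol1\n"
)

print(own_encryption_roots(sample))
```

The top-level encrypted dataset is expected to appear in the result. Any child that shows up there is not "unlocked by ancestor" and would need its own key.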

I received the same error for another dataset that only contains zvols (in this case replicas of my VM pool).

Problem 2

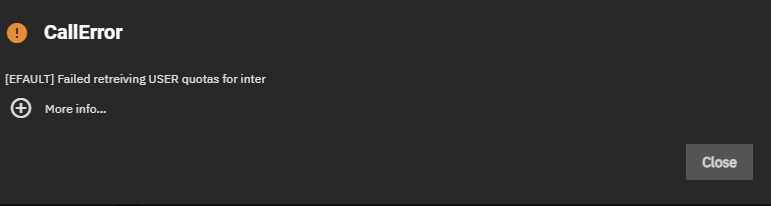

I think the issue occurred after I deleted the nextcloud dataset on the backup pool in order to retry the replication later, having removed it from the task (there were other datasets not yet copied and I wanted everything to finish while I was at work). Almost every time I go to the Datasets tab now, I'm greeted by:

Update: I had not yet rebooted the server when I first wrote this. I have since rebooted and the error seems to have disappeared.

Is this an issue? I will destroy the `inter` pool anyway once I have recreated my main storage pool and replicated all datasets from `inter` back to it.

As always, any input is greatly appreciated! I want to make absolutely sure I have a working copy before I destroy my main pool.
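One check I am considering before destroying anything is comparing snapshot GUIDs, since a snapshot received via replication keeps the GUID of the source snapshot. The sketch below assumes the tab-separated output of `zfs list -H -p -t snapshot -o name,guid -r <pool>` for both pools. The source pool name `tank`, the dataset names, and the GUID values are placeholders; `inter` is the backup pool.

```python
def parse_guids(output: str, prefix: str) -> dict[str, str]:
    """Map snapshot name (with the pool prefix stripped) to its GUID."""
    guids = {}
    for line in output.splitlines():
        parts = line.split("\t")
        if len(parts) != 2:
            continue  # ignore lines that are not "name<TAB>guid"
        name, guid = parts
        if name.startswith(prefix):
            name = name[len(prefix):]
        guids[name] = guid
    return guids


def missing_on_destination(src: dict[str, str], dst: dict[str, str]) -> list[str]:
    """Source snapshots that are absent or have a different GUID on the destination."""
    return sorted(name for name, guid in src.items() if dst.get(name) != guid)


# Placeholder outputs; replace with the real command output from each pool.
source_out = "tank/nextcloud@snap1\t111\ntank/nextcloud@snap2\t222\n"
dest_out = "inter/nextcloud@snap1\t111\n"

src = parse_guids(source_out, "tank/")
dst = parse_guids(dest_out, "inter/")
print(missing_on_destination(src, dst))
```

An empty list only says that every source snapshot has a counterpart with the same GUID on the backup pool. It is not a data integrity check, so a scrub of `inter` would still be worth doing.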

TrueNAS-SCALE-22.12.4.2

Supermicro X10SRi-F, Xeon 2640v4, 128 GB ECC RAM, Seasonic PX-750 in Fractal Design R5

Data pool: 4*4TB WD RED PLUS

VM pool: 2*500GB SSD (Samsung Evo 850 / Crucial mx500)

Full error shown on the Datasets tab (Problem 2):

```
Error: concurrent.futures.process._RemoteTraceback:
"""
Traceback (most recent call last):
  File "/usr/lib/python3/dist-packages/middlewared/plugins/zfs.py", line 760, in get_quota
    quotas = resource.userspace(quota_props)
  File "libzfs.pyx", line 465, in libzfs.ZFS.__exit__
  File "/usr/lib/python3/dist-packages/middlewared/plugins/zfs.py", line 760, in get_quota
    quotas = resource.userspace(quota_props)
  File "libzfs.pyx", line 3532, in libzfs.ZFSResource.userspace
libzfs.ZFSException: cannot get used/quota for inter: dataset is busy

During handling of the above exception, another exception occurred:

Traceback (most recent call last):
  File "/usr/lib/python3.9/concurrent/futures/process.py", line 243, in _process_worker
    r = call_item.fn(*call_item.args, **call_item.kwargs)
  File "/usr/lib/python3/dist-packages/middlewared/worker.py", line 115, in main_worker
    res = MIDDLEWARE._run(*call_args)
  File "/usr/lib/python3/dist-packages/middlewared/worker.py", line 46, in _run
    return self._call(name, serviceobj, methodobj, args, job=job)
  File "/usr/lib/python3/dist-packages/middlewared/worker.py", line 40, in _call
    return methodobj(*params)
  File "/usr/lib/python3/dist-packages/middlewared/worker.py", line 40, in _call
    return methodobj(*params)
  File "/usr/lib/python3/dist-packages/middlewared/plugins/zfs.py", line 762, in get_quota
    raise CallError(f'Failed retreiving {quota_type} quotas for {ds}')
middlewared.service_exception.CallError: [EFAULT] Failed retreiving USER quotas for inter
"""

The above exception was the direct cause of the following exception:

Traceback (most recent call last):
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 184, in call_method
    result = await self.middleware._call(message['method'], serviceobj, methodobj, params, app=self)
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 1317, in _call
    return await methodobj(*prepared_call.args)
  File "/usr/lib/python3/dist-packages/middlewared/schema.py", line 1379, in nf
    return await func(*args, **kwargs)
  File "/usr/lib/python3/dist-packages/middlewared/plugins/pool.py", line 4116, in get_quota
    quota_list = await self.middleware.call(
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 1368, in call
    return await self._call(
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 1325, in _call
    return await self._call_worker(name, *prepared_call.args)
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 1331, in _call_worker
    return await self.run_in_proc(main_worker, name, args, job)
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 1246, in run_in_proc
    return await self.run_in_executor(self.__procpool, method, *args, **kwargs)
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 1231, in run_in_executor
    return await loop.run_in_executor(pool, functools.partial(method, *args, **kwargs))
middlewared.service_exception.CallError: [EFAULT] Failed retreiving USER quotas for inter
```
