aufalien (Patron; joined Jul 25, 2013; 374 messages)

Hi,

I have a few tools running on my ZFS box. Here are the commands and flags:

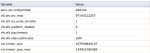

`arcstat.py 1`

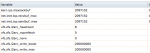

`zilstat -l 5`

`zpool iostat 1`

`zfs-mon` (run within a jailed FreeBSD instance)
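The interval argument on commands like `arcstat.py 1` and `zpool iostat 1` means the tool samples cumulative kernel counters and prints the change per interval. A minimal sketch of that idea, assuming Python 3.7+ on the FreeNAS host and the FreeBSD sysctl names listed below (check them with `sysctl kstat.zfs.misc.arcstats`). The counter selection is only an example; it is not necessarily what arcstat.py reads.

```python
import subprocess
import time

# Cumulative ZFS counters as exposed by FreeBSD sysctl (assumed names;
# verify on your system before relying on them).
COUNTERS = [
    "kstat.zfs.misc.arcstats.hits",
    "kstat.zfs.misc.arcstats.misses",
    "kstat.zfs.misc.arcstats.l2_hits",
    "kstat.zfs.misc.arcstats.l2_misses",
]


def read_counters(names):
    """Read integer sysctl values; `sysctl -n` prints one value per line."""
    out = subprocess.run(["sysctl", "-n", *names], capture_output=True,
                         text=True, check=True).stdout
    return dict(zip(names, (int(v) for v in out.split())))


def per_second(prev, cur, interval):
    """Turn two snapshots of cumulative counters into per-second rates."""
    return {k: (cur[k] - prev[k]) / interval for k in cur}


def sample(interval=1.0, count=5):
    """Print `count` samples of per-second counter rates."""
    prev = read_counters(COUNTERS)
    for _ in range(count):
        time.sleep(interval)
        cur = read_counters(COUNTERS)
        print(per_second(prev, cur, interval))
        prev = cur
```

On the FreeNAS host, `sample(1.0, 10)` would print ten one-second samples. Only `per_second` is platform-independent; the sysctl reads assume FreeBSD.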

Besides wanting to get a general feel for my new server (I had to put it into production before I could properly test it or understand ZFS), I'm running into two issues:

1) The load average spikes to around 40 daily and hovers between 3 and 16 most of the time. During light access it sits around 0-1, of course.

2) Enabling jumbo frames spikes the load to around 60, and while the box doesn't lock up, client access is severely interrupted. So I've kept standard frames for now, but I will need jumbo frames this year thanks to this insanity of people thinking they need a 4K TV, but I digress.
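Load average figures like these are easier to judge relative to the number of CPUs, since per-CPU values well above 1 mean more work is queued than there are CPUs to run it. A small sketch, assuming Python 3 is available on the box. Note that `os.cpu_count()` reports logical CPUs, so with Hyper-Threading enabled on two E5-2430s it may report 24 rather than 12.

```python
import os


def load_per_core(load, cores):
    """Load average divided by the number of cores (or logical CPUs)."""
    return load / cores


if __name__ == "__main__":
    cpus = os.cpu_count() or 1  # logical CPUs, including Hyper-Threading
    one_min, five_min, fifteen_min = os.getloadavg()
    for label, load in (("1-min", one_min), ("5-min", five_min),
                        ("15-min", fifteen_min)):
        print(f"{label} load {load:.2f} on {cpus} CPUs = "
              f"{load_per_core(load, cpus):.2f} per CPU")
```

For the numbers above, a load of 40 on 12 physical cores works out to about 3.3 per core, and a load of 60 to 5 per core.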

arcstat consistently shows an ARC size of about 9-11 GB.
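One way to put the 9-11 GB figure in context is to compare it with the ARC's current target size (`c`) and its ceiling (`c_max`). Here is a sketch assuming Python 3.7+ and these FreeBSD sysctl names (check with `sysctl kstat.zfs.misc.arcstats`). The `summarize` helper is hypothetical, written just for this example.

```python
import subprocess

# FreeBSD sysctl names for ARC sizing (assumed; verify on your box).
ARC_KEYS = {
    "size": "kstat.zfs.misc.arcstats.size",  # current ARC size, bytes
    "target": "kstat.zfs.misc.arcstats.c",   # current ARC target size, bytes
    "max": "kstat.zfs.misc.arcstats.c_max",  # ARC ceiling, bytes
}

GIB = 1024 ** 3


def read_arc_sizes():
    """Read ARC sizes in bytes via sysctl (FreeBSD/FreeNAS only)."""
    out = subprocess.run(["sysctl", "-n", *ARC_KEYS.values()],
                         capture_output=True, text=True,
                         check=True).stdout.split()
    # Dict iteration order matches the order the names were passed in.
    return {key: int(value) for key, value in zip(ARC_KEYS, out)}


def summarize(sizes):
    """One-line summary in GiB plus the fraction of c_max currently in use."""
    used = sizes["size"] / sizes["max"]
    return (f"ARC {sizes['size'] / GIB:.1f} GiB, "
            f"target {sizes['target'] / GIB:.1f} GiB, "
            f"max {sizes['max'] / GIB:.1f} GiB ({used:.0%} of max)")
```

On the FreeNAS host, `print(summarize(read_arc_sizes()))` would show whether the ARC is pressing against its ceiling or sitting well below it.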

I have a temporary read-cache appliance in front of it to offload reads. It will go away soon, leaving my FreeNAS box totally exposed.

My environment:

- FreeNAS 9.1.1 (planning to upgrade to the latest release when time permits)
- Dual Intel Xeon E5-2430 processors, 12 cores total
- 128 GB RAM
- 39x 3 TB Seagate ES.2 SATA drives
- Single 10 GbE link, soon to be trunked to 2x 10 GbE
- 1 TB of SSD L2ARC
- 2x striped SSD ZIL devices
- LZ4 compression

About 350 render nodes hit it during heavy 2D/3D renders (which now run all day long), plus about 100 workstations (a mix of Linux/OS machines and a few Windows boxes), all over NFS.
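A back-of-the-envelope check of what the network can give each client when everyone is busy at once. This assumes every client pulls data simultaneously and ignores NFS/TCP/Ethernet overhead. Also, link aggregation (LACP) typically balances traffic per flow, so trunking raises the aggregate but a single client still tops out at one link's speed.

```python
def per_client_mb_s(link_gbit, links, clients):
    """Fair-share throughput per client in MB/s (decimal units), no overhead."""
    total_bytes_per_s = link_gbit * 1e9 * links / 8
    return total_bytes_per_s / clients / 1e6


for links in (1, 2):
    for clients in (350, 450):
        share = per_client_mb_s(10, links, clients)
        print(f"{links}x 10 GbE, {clients} clients: {share:.2f} MB/s each")
```

On the current single 10 GbE link, 350 render nodes reading at once would each get roughly 3.6 MB/s; trunking to 2x 10 GbE roughly doubles that aggregate share.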

2D renders do I/O constantly while rendering a frame, whereas a 3D render mostly accesses the server once per frame. It may fetch more data during that frame (textures, etc.), but mostly it's a single server hit per frame. Well, actually two: a read at the start and a write at the end.
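To turn the 3D per-frame pattern into a request rate, here is a rough sketch. `seconds_per_frame` is a hypothetical parameter (no frame times are given above), and a "hit" here means one logical read or write, not one NFS operation; a single file read can involve many NFS RPCs.

```python
def hits_per_second(nodes, hits_per_frame, seconds_per_frame):
    """Average server hits per second if every node renders frames back to back."""
    return nodes * hits_per_frame / seconds_per_frame


# Hypothetical frame times, purely for illustration.
for seconds in (30, 60, 300):
    rate = hits_per_second(350, 2, seconds)
    print(f"{seconds:>4} s/frame -> {rate:.1f} hits/s")
```

The estimate only shows how the 3D load scales with node count divided by frame time. The 2D renders, which do I/O throughout a frame, don't fit this simple model.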

I was thinking of bumping the RAM up to 256 GB, but if arcstat only shows 11 GB max, that doesn't sound like money well spent.
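ARC size alone doesn't say whether more RAM would help. The hit ratio over an interval is usually the more telling number. As a rough rule of thumb, a high ARC hit ratio while the ARC sits well below `c_max` suggests the ARC isn't short of memory, whereas a low hit ratio with the ARC pinned at its ceiling would point the other way. This sketch assumes the inputs are two snapshots of the FreeBSD `kstat.zfs.misc.arcstats` counters `hits`, `misses`, `l2_hits` and `l2_misses`, taken some seconds apart. The dictionary keys are shortened names chosen for this example.

```python
def hit_ratio(hits, misses):
    """Fraction of lookups served from cache, or None if there were no lookups."""
    total = hits + misses
    return hits / total if total else None


def interval_ratios(prev, cur):
    """ARC and L2ARC hit ratios over the interval between two counter snapshots."""
    delta = {key: cur[key] - prev[key] for key in cur}
    return {
        "arc": hit_ratio(delta["hits"], delta["misses"]),
        "l2arc": hit_ratio(delta["l2_hits"], delta["l2_misses"]),
    }
```

Using deltas rather than the raw cumulative counters matters: the cumulative totals include everything since boot, which hides what the render farm is doing right now.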

My question is not what's going on, but rather: how can I get a better view of what's going on? I'm having a hard time interpreting the numbers from the four tools noted above.

I can upgrade either the processors or the memory, but not both.

I can provide a few screenshots of what's going on, but I didn't want to vomit all this info at once. I'll only barf it all up if there's interest in this thread :)
