Hi,

I am fairly certain I know what is wrong here / what I am doing wrong, but thought I would post, almost as a sanity check to myself.

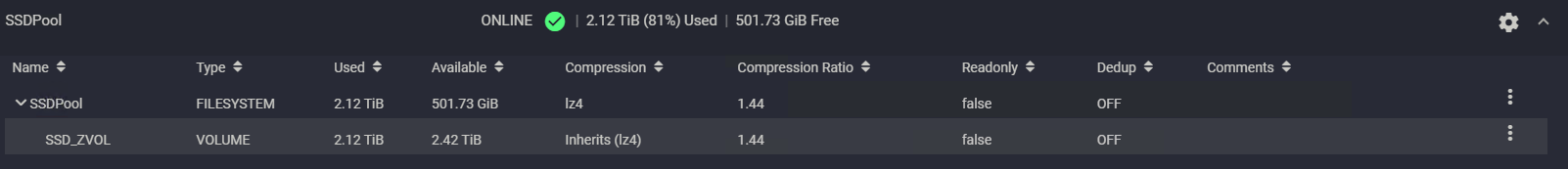

Storage Pool: SSDPool

3 mirrored VDEVs of Crucial 1TB MX100 SSDs

Log on Optane

Cache on Optane

There is a single ZVOL for iSCSI (VMware) that uses approximately 80% of the available space on the pool (with no snapshots).

VMware shows that I am using 346GB of 2.09TB capacity, which seems about right.

I snapshot the pool (in TrueNAS) on an hourly basis and keep the snapshots for three days, which in a steady state works just fine.

I have just upgraded to TrueNAS-12.0-U5. This involves migrating all active VMs to what I call "swing storage", aka another iSCSI target on a different NAS, so that I can upgrade and reboot the TrueNAS without the VMs having a hissy fit. I then migrate the VMs back. All good, except that shortly afterwards I get an alert saying the snapshot task has failed due to lack of disk space.

My understanding:

Migrating the VMs off and then back on creates snapshot data equal in size to the VMs. Coupled with the original snapshot, this means my snapshots take up a minimum of 2 × total VM space (2 × 346GB = 692GB, roughly 700GB), plus any normal hourly churn. This space MUST come from the 501GB left in the pool, which is clearly impossible. The numbers get even worse if I do this more often within the snapshot lifetime.
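To make the arithmetic above concrete, here is a minimal Python sketch of my estimate. The function names are made up for illustration, and the "(round trips + 1) full copies" model is only my assumption about how the snapshots behave, not something I have confirmed against what ZFS actually reports.

```python
def snapshot_space_needed_gb(vm_gb, round_trips=1, churn_gb=0.0):
    """Estimate snapshot space after migrating the VMs away and back.

    Assumption (from the reasoning in this post, not measured): each
    round trip inside the retention window pins one more full copy of
    the VM data, on top of the copy held by the original snapshot.
    """
    return (round_trips + 1) * vm_gb + churn_gb


def shortfall_gb(needed_gb, free_gb):
    """How far the estimate exceeds the free pool space (0 if it fits)."""
    return max(0, needed_gb - free_gb)


VM_GB = 346    # used space reported by VMware
FREE_GB = 501  # space left in the pool outside the ZVOL

needed = snapshot_space_needed_gb(VM_GB)
print(f"estimated snapshot space: {needed} GB")
print(f"shortfall against free space: {shortfall_gb(needed, FREE_GB)} GB")
```

With one round trip this gives 692GB needed against 501GB free, which matches the failure I am seeing. Hourly churn is left at zero here because I have not measured it.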

Solution:

Either add another VDEV to the pool without changing the size of the ZVOL, to give more space (expensive and, given the usage, unnecessary).

Or reduce the size of the ZVOL to 1TB, giving lots of space for the snapshots to live in (cheap).
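A rough comparison of the two options, again as a Python sketch. It assumes the ZVOL's full size is reserved in the pool (so shrinking it hands the difference back as free space), that 1TB = 1000GB, and that a new mirrored VDEV would contribute its full nominal 1000GB, which is optimistic. The helper names are hypothetical.

```python
ZVOL_GB = 2090        # current ZVOL size as seen by VMware (2.09TB)
FREE_GB = 501         # space currently left in the pool
NEEDED_GB = 2 * 346   # my snapshot estimate for one migrate-out/migrate-back


def free_after_adding_vdev(free_gb, vdev_usable_gb):
    # Assumption: the new VDEV's usable capacity is all added to free space.
    return free_gb + vdev_usable_gb


def free_after_shrinking_zvol(free_gb, old_zvol_gb, new_zvol_gb):
    # Assumption: the ZVOL's size is fully reserved, so shrinking it
    # returns the difference to the pool.
    return free_gb + (old_zvol_gb - new_zvol_gb)


options = {
    "add 1TB mirrored VDEV": free_after_adding_vdev(FREE_GB, 1000),
    "shrink ZVOL to 1TB": free_after_shrinking_zvol(FREE_GB, ZVOL_GB, 1000),
}

for name, free_gb in options.items():
    headroom = free_gb - NEEDED_GB
    print(f"{name}: {free_gb} GB free, {headroom} GB headroom over the estimate")
```

On these assumptions both options would cover one round trip, and shrinking the ZVOL leaves slightly more room than a fourth VDEV would, at no cost.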

Am I thinking this through correctly or have I lost the plot and got this wrong?