WIRLYWIRLY

Cadet

- Joined

- Mar 29, 2015

- Messages

- 4

How's it going. I just set up my first FreeNAS machine (running the latest 9.1) and have been playing with it while importing some NTFS drives. I'm coming from Windows and have never used Linux, FreeBSD, or anything other than Windows. I know my way around the Windows cmd prompt, which has helped me figure out SSH, but it still gets pretty confusing until I learn all the terminology. Anyway, here is what I've been up to...

All my drives are pretty much full to capacity (1x2TB, 1x3TB, 1x4TB), and the only free HDD is a new one I just bought (4TB). What I am doing is importing an NTFS drive into a dataset on the new 4TB ZFS volume, waiting for it to finish, creating a striped volume on the NTFS drive I copied from, then using "zfs send | receive" to copy the dataset back to the original HDD, which is now the newly striped volume. I am doing that for all 3 NTFS drives, each of which I would like to add as its own striped volume on FreeNAS.
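To make the copy-back step concrete, here is a minimal sketch of the commands involved, written as a small Python helper that only builds and prints them (it does not run anything). The pool and dataset names (`newvol/import2tb`, `vol2tb`) and the snapshot name `migrate` are hypothetical placeholders, not my real names, and the sketch assumes a plain snapshot followed by a full send is all that is needed.

```python
import shlex


def build_copy_back_commands(src_dataset, dst_pool, snap_name="migrate"):
    """Return the shell steps for copying a dataset to another pool.

    All names are hypothetical placeholders; nothing is executed here.
    """
    snapshot = f"{src_dataset}@{snap_name}"
    # The received dataset reuses the last path component of the source name.
    dst_dataset = f"{dst_pool}/{src_dataset.split('/')[-1]}"
    return [
        # 1. zfs send operates on snapshots, so take one first.
        f"zfs snapshot {shlex.quote(snapshot)}",
        # 2. Stream the snapshot into the destination pool.
        f"zfs send {shlex.quote(snapshot)} | zfs receive {shlex.quote(dst_dataset)}",
    ]


if __name__ == "__main__":
    for step in build_copy_back_commands("newvol/import2tb", "vol2tb"):
        print(step)
```

Printing the steps first gives a chance to double-check the source and destination names before typing anything over SSH.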

So basically, when I'm done I'll have 4 stripes, each one its own HDD. I know there is no redundancy, but most of these files get deleted within a few months anyway to make room for new stuff. I'm not worried about losing them; I just want to hang on to them until then. But I would like to avoid losing the ENTIRE pool if an HDD in a stripe fails. I'd rather lose 1 volume and all its data than all the data on 4 HDDs. Also, like I said, my 3 NTFS drives are full to capacity (9TB total) and all I have free is a 4TB drive, so I don't have the space to back up all the files while I stripe them.

So here is my question(s)...

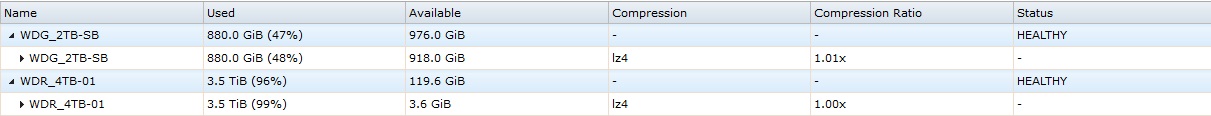

After importing an NTFS drive, I noticed something weird about the available space that the volume claims vs. the available space that the dataset claims. I have attached a picture.

I tried to find an answer, and a user explains why pretty well in one of his posts --> https://forums.freenas.org/index.php?threads/volume-vs-dataset-used-storage.39161/#post-240536

However, unlike the OP in that thread, who is using RAIDZ2, I am using a stripe for each volume, so there should not be any parity.

Q) Why is the available space on the dataset so much less than what it says on the volume?

P.S.

While I'm here, I might as well ask this too...

I have a dataset shared via CIFS (Samba). Under permissions, the group "Samba" is the owner. I have also made a user and added them to that group. Guest access is on, and I have given Everyone Modify (read/write/execute/etc.) on the Windows Security tab. However, when connecting to the share from Windows, I am ALWAYS asked for login credentials. For the user who is a member of the owner group it works fine: I just put in the login credentials and away we go. However, because it always asks for credentials, guests have no access. Any idea what might be causing that? I've looked all over the Shares/Permissions/Samba settings and can't find anything that fixes it.
