NorthernFrontier (Cadet · Joined Aug 19, 2022 · Messages: 4)

Hey all, I'm new to TrueNAS and recently repurposed an old gaming tower as a non-critical NAS.

I currently have:

Gigabyte Ultra Durable GA-970A-DS3P motherboard

AMD FX-6300

16 GB of 2333 MHz DDR3

A mash-up of four 1 TB drives (based on what drives I had lying around), arranged in a striped configuration: 1 drive per vdev, 4 vdevs in the pool. (I'm completely aware that striping is likely to cause data loss if a drive fails. As for the vdev configuration, I honestly have no idea. Could be fine, could cause problems.)

For the network card, I'm just running the built-in Realtek chip on my motherboard (1000 Mbit).

I'm running TrueNAS-13.0-U1.1 from a USB 2.0 drive.

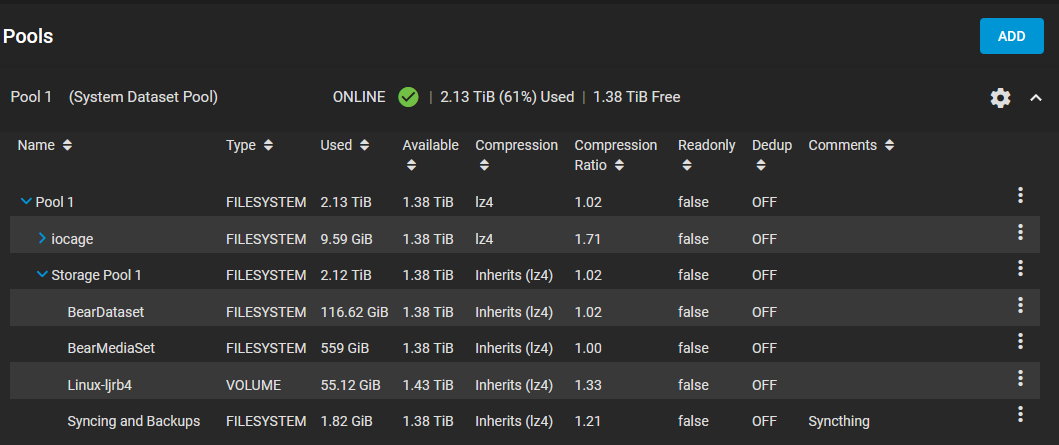

As the title states, I'm having issues regarding the reported size in the GUI. My system tree is looking like this:

I'm noticing that Pool 1 and Storage Pool 1 are reporting over 2 TiB used, but my actual shared datasets take up far less, about 732.5 GiB. That leaves me with less space than I expected.

So far, I have:

-Checked with FileZilla, WinSCP, and CyberDuck for any possible hidden folders that Windows isn't reporting.

-Checked with Windows File Explorer for any files

-Rebooted my NAS

-Ran zfs list and got this back:

Code:

```
root@truenas[~]# zfs list
NAME                                                     USED   AVAIL  REFER  MOUNTPOINT
Pool 1                                                   2.13T  1.38T  96K    /mnt/Pool 1
Pool 1/.system                                           44.5M  1.38T  112K   legacy
Pool 1/.system/configs-81963fc7279b4cf49c43e5a8cbe36cdb  424K   1.38T  360K   legacy
Pool 1/.system/cores                                     160K   1024M  96K    legacy
Pool 1/.system/rrd-81963fc7279b4cf49c43e5a8cbe36cdb      38.7M  1.38T  24.1M  legacy
Pool 1/.system/samba4                                    1.70M  1.38T  340K   legacy
Pool 1/.system/services                                  96K    1.38T  96K    legacy
Pool 1/.system/syslog-81963fc7279b4cf49c43e5a8cbe36cdb   3.11M  1.38T  1.76M  legacy
Pool 1/.system/webui                                     96K    1.38T  96K    legacy
Pool 1/Storage Pool 1                                    2.12T  1.38T  132K   /mnt/Pool 1/Storage Pool 1
Pool 1/Storage Pool 1/BearDataset                        117G   1.38T  117G   /mnt/Pool 1/Storage Pool 1/BearDataset
Pool 1/Storage Pool 1/BearMediaSet                       559G   1.38T  559G   /mnt/Pool 1/Storage Pool 1/BearMediaSet
Pool 1/Storage Pool 1/Linux-ljrb4                        55.1G  1.43T  4.33G  -
Pool 1/Storage Pool 1/Syncing and Backups                1.82G  1.38T  1.81G  /mnt/Pool 1/Storage Pool 1/Syncing and Backups
Pool 1/iocage                                            9.59G  1.38T  7.83M  /mnt/Pool 1/iocage
Pool 1/iocage/download                                   1.03G  1.38T  104K   /mnt/Pool 1/iocage/download
Pool 1/iocage/download/12.3-RELEASE                      403M   1.38T  403M   /mnt/Pool 1/iocage/download/12.3-RELEASE
Pool 1/iocage/download/13.0-RELEASE                      401M   1.38T  401M   /mnt/Pool 1/iocage/download/13.0-RELEASE
Pool 1/iocage/download/13.1-RELEASE                      251M   1.38T  251M   /mnt/Pool 1/iocage/download/13.1-RELEASE
Pool 1/iocage/images                                     96K    1.38T  96K    /mnt/Pool 1/iocage/images
Pool 1/iocage/jails                                      5.29G  1.38T  120K   /mnt/Pool 1/iocage/jails
Pool 1/iocage/jails/ADGuardServer                        90.4M  1.38T  308K   /mnt/Pool 1/iocage/jails/ADGuardServer
Pool 1/iocage/jails/ADGuardServer/root                   90.1M  1.38T  90.1M  /mnt/Pool 1/iocage/jails/ADGuardServer/root
Pool 1/iocage/jails/EmbyServer                           2.10G  1.38T  300K   /mnt/Pool 1/iocage/jails/EmbyServer
Pool 1/iocage/jails/EmbyServer/root                      2.10G  1.38T  2.10G  /mnt/Pool 1/iocage/jails/EmbyServer/root
Pool 1/iocage/jails/Guacamole                            1.25G  1.38T  328K   /mnt/Pool 1/iocage/jails/Guacamole
Pool 1/iocage/jails/Guacamole/root                       1.25G  1.38T  1.25G  /mnt/Pool 1/iocage/jails/Guacamole/root
Pool 1/iocage/jails/MineOS                               969M   1.38T  360K   /mnt/Pool 1/iocage/jails/MineOS
Pool 1/iocage/jails/MineOS/root                          969M   1.38T  969M   /mnt/Pool 1/iocage/jails/MineOS/root
Pool 1/iocage/jails/OpenVPN                              69.3M  1.38T  340K   /mnt/Pool 1/iocage/jails/OpenVPN
Pool 1/iocage/jails/OpenVPN/root                         69.0M  1.38T  69.0M  /mnt/Pool 1/iocage/jails/OpenVPN/root
Pool 1/iocage/jails/SyncThing                            859M   1.38T  308K   /mnt/Pool 1/iocage/jails/SyncThing
Pool 1/iocage/jails/SyncThing/root                       859M   1.38T  854M   /mnt/Pool 1/iocage/jails/SyncThing/root
Pool 1/iocage/log                                        232K   1.38T  152K   /mnt/Pool 1/iocage/log
Pool 1/iocage/releases                                   3.26G  1.38T  104K   /mnt/Pool 1/iocage/releases
Pool 1/iocage/releases/12.3-RELEASE                      1.32G  1.38T  96K    /mnt/Pool 1/iocage/releases/12.3-RELEASE
Pool 1/iocage/releases/12.3-RELEASE/root                 1.32G  1.38T  1.32G  /mnt/Pool 1/iocage/releases/12.3-RELEASE/root
Pool 1/iocage/releases/13.0-RELEASE                      1.31G  1.38T  96K    /mnt/Pool 1/iocage/releases/13.0-RELEASE
Pool 1/iocage/releases/13.0-RELEASE/root                 1.31G  1.38T  1.31G  /mnt/Pool 1/iocage/releases/13.0-RELEASE/root
Pool 1/iocage/releases/13.1-RELEASE                      644M   1.38T  96K    /mnt/Pool 1/iocage/releases/13.1-RELEASE
Pool 1/iocage/releases/13.1-RELEASE/root                 644M   1.38T  644M   /mnt/Pool 1/iocage/releases/13.1-RELEASE/root
Pool 1/iocage/templates                                  96K    1.38T  96K    /mnt/Pool 1/iocage/templates
boot-pool                                                1.29G  26.3G  24K    none
```

I'm no expert, but I don't see 2.2 TiB worth of information there. If I map a share to Windows, I only see the 1.38 TiB available.

I've read through a few posts on the forums about similar topics, but they were for older versions of TrueNAS or even FreeNAS. The one that was marked as "Solved" was solved through a reboot. The others were discussions about possible GUI bugs or browser caching, or were just outright too technical for me to understand at the moment.
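
To put a number on the gap, here is a rough arithmetic check of the figures in the listing above. It is only a sketch: it assumes the K/M/G/T suffixes are powers of 1024, it uses the rounded values exactly as `zfs list` printed them, and the names `to_bytes`, `children_used`, and `unaccounted` are made up for illustration. It compares the USED value of `Pool 1/Storage Pool 1` with the sum of its four direct children.

```python
# Rough arithmetic check on the `zfs list` figures quoted in this post.
# Assumption: K/M/G/T suffixes are binary multiples (powers of 1024).
UNITS = {"K": 1024, "M": 1024**2, "G": 1024**3, "T": 1024**4}
GIB = 1024**3


def to_bytes(size: str) -> float:
    """Convert a zfs-style size string such as '55.1G' to bytes."""
    return float(size[:-1]) * UNITS[size[-1]]


# USED values copied from the listing (already rounded by zfs).
parent_used = to_bytes("2.12T")  # Pool 1/Storage Pool 1
children_used = {
    "BearDataset": "117G",
    "BearMediaSet": "559G",
    "Linux-ljrb4": "55.1G",
    "Syncing and Backups": "1.82G",
}

children_total = sum(to_bytes(v) for v in children_used.values())
unaccounted = parent_used - children_total

print(f"children total: {children_total / GIB:.1f} GiB")
print(f"parent USED:    {parent_used / GIB:.1f} GiB")
print(f"unaccounted:    {unaccounted / GIB:.1f} GiB")
```

The children add up to roughly 733 GiB, which matches the figure I quoted, so about 1.4 TiB of the parent's USED value is not explained by the datasets shown. The script only measures the gap and says nothing about its cause. As far as I understand, `zfs list -o space` breaks USED down into snapshot, dataset, reservation, and child components, which may be the next thing to look at.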