Hello everyone.

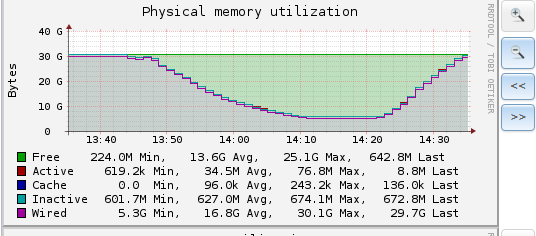

I have a bare-metal FreeNAS 9.10.2-U6 box presenting 2 zvols to 2 ESXi 6.0 (build 5050593) hosts via iSCSI. The VMs power up fine, but when I attempt to vMotion a VM from one datastore to the other, the destination datastore disconnects from the ESXi host within about 1 minute. The migration appears to be bogging down the FreeNAS box: wired memory drops like a rock just before everything times out, then climbs back to the expected value. I have tried adding ZIL (SLOG) SSDs to both datastores, with the same result.

The FreeNAS hardware specs are below:

Code:

SuperMicro 2028U-TRTP+
2x E5-2620v3
32GB DDR4 ECC RAM
Volume 1: RAIDZ2 6x Seagate SATA 7.2k 2.5"
Volume 2: RAIDZ2 6x Seagate SAS 7.2k 2.5"
Intel 82599ES 10-Gigabit SFP+ NIC
LSI SAS 9305-24I

VMware vobd.log when the issue occurs:

Code:

2017-12-04T22:21:35.895Z: [vmfsCorrelator] 16119849733us: [esx.problem.vmfs.heartbeat.timedout] 5a20c4bd-fce101d7-5e83-0cc47ab58e90 SharedStorage_SAS

Example of FreeNAS dmesg output when the issue occurs:

Code:

WARNING: 192.1.11.222 (iqn.1998-01.com.vmware:vmhost2-24375a89): no ping reply (NOP-Out) after 5 seconds; dropping connection
ctl_datamove: tag 0xac618 on (0:3:1) aborted
ctl_datamove: tag 0xac61c on (0:3:1) aborted
ctl_datamove: tag 0xac61d on (0:3:1) aborted
(0:1:0/0): WRITE(10). CDB: 2a 00 73 ee 60 00 00 08 00 00
(0:1:0/0): WRITE(16). CDB: 8a 00 00 00 00 02 b5 4f 70 00 00 00 08 00 00 00
(0:1:0/0): WRITE(16). CDB: 8a 00 00 00 00 02 b5 4f a8 00 00 00 08 00 00 00
(0:1:0/0): WRITE(16). CDB: 8a 00 00 00 00 02 c1 f3 d0 00 00 00 08 00 00 00
(0:1:0/0): Tag: 0x11f3ac, type 1
(0:1:0/0): Tag: 0x11f3ad, type 1
(0:1:0/0): ctl_datamove: 349 seconds
(0:1:0/0): ctl_datamove: 349 seconds
(0:1:0/0): Tag: 0x11f389, type 1
(0:1:0/0): Tag: 0x11f3ae, type 1
(0:1:0/0): ctl_process_done: 350 seconds
(0:1:0/0): ctl_datamove: 349 seconds
(0:1:0/0): WRITE(10). CDB: 2a 00 72 dc 18 00 00 08 00 00
(0:1:0/0): Tag: 0x11f374, type 1
(0:1:0/0): WRITE(10). CDB: 2a 00 72 dc 80 00 00 08 00 00
(0:1:0/0): ctl_process_done: 350 seconds
(0:1:0/0): Tag: 0x11f3af, type 1
(0:1:0/0): WRITE(16). CDB: 8a 00 00 00 00 02 b5 4f b0 00 00 00 08 00 00 00
(0:1:0/0): Tag: 0x11f3b0, type 1
(0:1:0/0): ctl_datamove: 349 seconds
(0:1:0/0): ctl_datamove: 349 seconds
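For anyone reading the dmesg output, here is a rough Python sketch that decodes the WRITE(10) and WRITE(16) CDBs into a starting LBA and a transfer length in blocks, using the standard SCSI field layout. The function name `decode_write_cdb` is made up for illustration and is not part of FreeNAS or CTL; it only handles the two opcodes seen in the log.

```python
def decode_write_cdb(cdb_hex: str) -> dict:
    """Decode a SCSI WRITE(10) or WRITE(16) CDB given as space-separated hex bytes.

    Hypothetical helper for reading the log above; not a FreeNAS/CTL tool.
    """
    cdb = bytes.fromhex(cdb_hex)
    opcode = cdb[0]
    if opcode == 0x2A and len(cdb) == 10:
        # WRITE(10): 4-byte LBA in bytes 2-5, 2-byte transfer length in bytes 7-8
        lba = int.from_bytes(cdb[2:6], "big")
        blocks = int.from_bytes(cdb[7:9], "big")
    elif opcode == 0x8A and len(cdb) == 16:
        # WRITE(16): 8-byte LBA in bytes 2-9, 4-byte transfer length in bytes 10-13
        lba = int.from_bytes(cdb[2:10], "big")
        blocks = int.from_bytes(cdb[10:14], "big")
    else:
        raise ValueError(f"unsupported CDB: opcode {opcode:#04x}, {len(cdb)} bytes")
    return {"opcode": opcode, "lba": lba, "blocks": blocks}


if __name__ == "__main__":
    # Two CDBs copied from the dmesg output above
    for line in (
        "2a 00 73 ee 60 00 00 08 00 00",
        "8a 00 00 00 00 02 b5 4f 70 00 00 00 08 00 00 00",
    ):
        print(decode_write_cdb(line))
```

Every WRITE in the log carries a transfer length of 0x0800, i.e. 2048 blocks. If the extents use a 512-byte logical block size (an assumption; the block size is not stated in this post), that is 1 MiB per write, each of which had been outstanding for roughly 350 seconds.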

I'm not entirely sure where to go from here. Thank you for any and all help.

UPDATE: I've identified some issues, noted below. The same target name was being used for both extents, so I will create a second target and try this again. Worst case, I'll also try upgrading to 11U4. Speaking of which, is 11 officially released now?
