Hello FreeNAS forum,

First of all, thanks to the developers for this cool software, and thanks to this forum, which helped me learn the basics and details of FreeNAS.

It's a bit of a LONG story. You can also skip down to "HERE", where the actual question is asked.

I came to FreeNAS about 6 months ago because I was really unhappy with our IT installation.

About 4 years ago we bought a bundle of 2 ESXi servers (3 in the meantime) and a Netgear ReadyNAS 4200V1 (RAID-5) as storage (everything connected via 10 Gbit/s) from our IT company, who also built and configured everything. We have about 15 Windows VMs and 5 Linux VMs. Most of them don't have a high load.

About 30 users work on 3 terminal servers (Windows 2008 R2; we are currently moving to Windows 2012).

In my opinion the IT company did quite a poor job. They needed many months to set everything up. Since then we have had performance issues; above all, the users working on the terminal servers complained about "hanging" desktops. The IT company tried a lot to ease the problems, and I even bought some quite useless new hardware (faster 10K hard drives in RAID-1, new switches, etc.), but we never found a solution our users were really happy with.

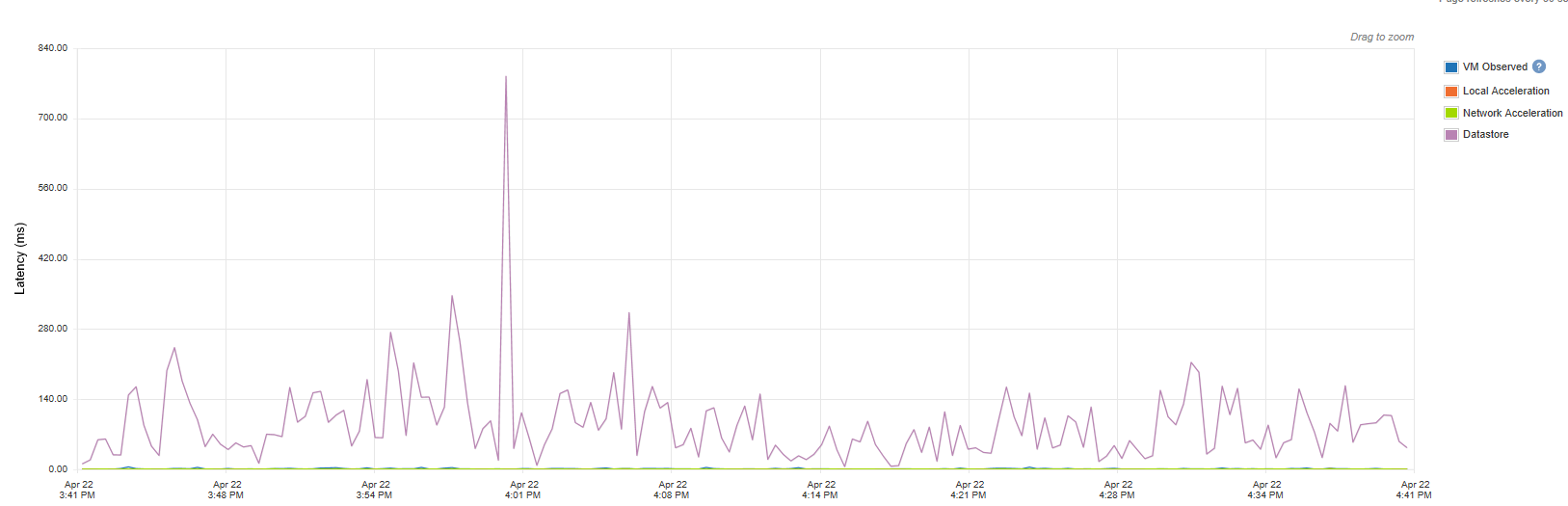

I have been fairly sure for a long time that the ReadyNAS 4200 is crap for our use case. In the attached image you can see the latency of one of our terminal servers on the ReadyNAS 4200. Average latencies of > 100 ms are already really bad, but the main problem seems to be the peaks. In this image it goes up to 800 ms, but I have often seen > 2,000 ms.
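Because the complaint is about peaks rather than the average, it can help to summarize exported latency samples with more than one number. The sketch below is a minimal illustration, assuming you can export per-interval latency values in milliseconds (for example from the vSphere performance charts) into a Python list; the function name, the 500 ms threshold and the sample values are hypothetical.

```python
import math
from statistics import mean


def summarize_latency(samples_ms, threshold_ms=500.0):
    """Summarize latency samples (in ms): average, 99th percentile, maximum,
    and how many samples exceed a 'user notices the hang' threshold.
    The default threshold of 500 ms is an illustrative assumption."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_ms)
    # Nearest-rank 99th percentile: smallest value with >= 99% of samples at or below it.
    rank = max(1, math.ceil(0.99 * len(ordered)))
    return {
        "avg_ms": mean(ordered),
        "p99_ms": ordered[rank - 1],
        "max_ms": ordered[-1],
        "over_threshold": sum(1 for s in ordered if s > threshold_ms),
    }


# Hypothetical samples: a moderate average can hide a single 800 ms stall.
print(summarize_latency([90, 110, 120, 100, 800]))
```

With these made-up samples the average is 244 ms while the maximum is 800 ms, which is the kind of gap that shows up as "hanging" desktops even when the average looks tolerable.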

Last summer we bought PernixData, which provides a local SSD read and write cache on the host side.

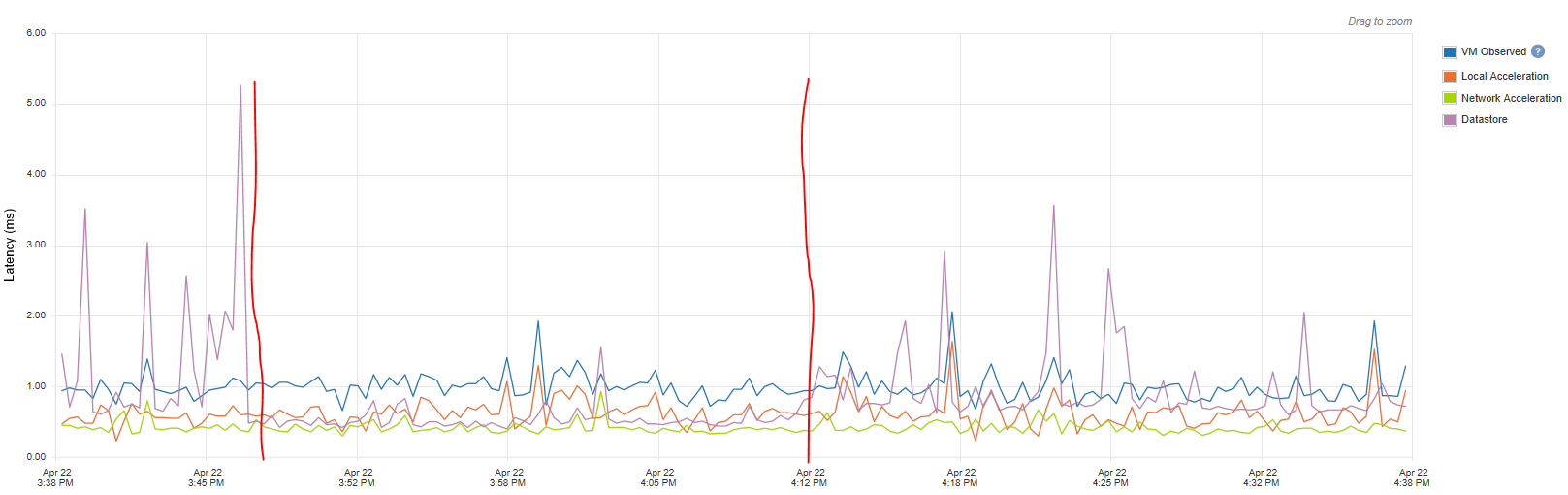

The attached images are from the performance tab of PernixData. You can see that the "VM observed" latency is about 1-3 ms.

This eased the problems a bit, but they didn't go away. I think the "hanging" desktops still happen when the requested data is not in the local SSD cache and the ReadyNAS takes too long to answer (e.g. 500 ms or more).

As the 2015 Christmas season approached (our high-volume business period), I searched for a solution on my own and came across FreeNAS.

I took our old Thomas Krenn server from 2009 (Xeon 3220 2.4 GHz with 8 GB ECC RAM) and first bought a single 850 Pro SSD (consumer version), since I didn't know whether this would work at all and improve on our existing ReadyNAS solution.

After some minor problems I tested it with one of our terminal server VMs and the results were great. Latencies were very low (~1 ms) and the users on that machine stopped complaining.

I decided to run with this solution over Christmas, so our users could work without being annoyed by hanging, slow IT.

To have a redundant solution I took another old server (an almost identical Thomas Krenn, Xeon 3210 2.13 GHz, 8 GB ECC RAM), bought 2x Samsung SSD 845DC EVO and set up a second FreeNAS, this time with the two SSDs in a mirror and a zvol as the iSCSI device extent.

Everything worked great over Christmas and I was really happy with this "solution".

I only moved the terminal servers to the 2 FreeNAS servers. The file servers and other servers with databases etc. stayed on the ReadyNAS 4200. I figured that, in the worst case, if something went wrong I could rebuild the terminal servers from the last backup. The user data is transferred to the file server every time a user logs out, so I saw only a small risk of major data loss.

But nothing went wrong.

Thank you for your patience. I just wanted to give you a proper picture of my situation and how I got here.

HERE starts the real question.

I realized that I had set up the first server, with the single Samsung 850 Pro SSD, as an iSCSI device extent without using ZFS at all (no zvol). Yesterday I changed this: I added a second 850 Pro SSD, recreated the storage with a zvol used as the iSCSI device extent, and reconnected it to my small ESXi cluster. I also switched the update train from 9.3 to 9.10, as that is now marked as stable.

I now have two very similar machines:

Freenas 1: 2x 850 Pro SSD on Xeon 3220 2.4 GHz, 8 GB ECC, connected via 1 Gbit/s, no ZIL write cache, no L2ARC read cache, FreeNAS 9.10

Freenas 2: 2x 845DC EVO on Xeon 3210 2.13 GHz, 8 GB ECC, connected via 1 Gbit/s, no ZIL write cache, no L2ARC read cache, FreeNAS 9.3

I had read in the forums that sync=always is highly recommended, especially for iSCSI block devices used by ESXi, so some months ago I switched the SSD zvol on the Freenas 2 server to this mode.

In the attached image you can see the difference in latency.

With sync=standard (between the red bars) the latency is < 1 ms (RAM latency). But with sync=always I also get quite stable, low latencies that stay below 5 ms all the time.
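One way to compare the two SSD models outside the ESXi/iSCSI path is to time small synchronous writes directly. The sketch below is a rough approximation, assuming that what matters under sync=always is how quickly each write can be made durable: it writes fixed-size blocks to a file and calls `os.fsync` after every write, recording the per-write time in milliseconds. The function name, the file path and the 4 KiB default block size are hypothetical choices, and the numbers will not match what ESXi reports, since the network and iSCSI layers are left out.

```python
import os
import time


def sync_write_latencies(path, count=100, block_size=4096):
    """Write `count` blocks of `block_size` bytes to `path`, calling fsync after
    each write, and return the per-write latency in milliseconds.
    This only approximates sync=always behaviour; it is not a ZFS tool."""
    block = os.urandom(block_size)
    flags = os.O_WRONLY | os.O_CREAT | os.O_TRUNC | getattr(os, "O_BINARY", 0)
    latencies = []
    fd = os.open(path, flags, 0o600)
    try:
        for _ in range(count):
            start = time.perf_counter()
            os.write(fd, block)
            # Do not continue until the OS reports the data as written to stable storage.
            os.fsync(fd)
            latencies.append((time.perf_counter() - start) * 1000.0)
    finally:
        os.close(fd)
    return latencies


if __name__ == "__main__":
    # Hypothetical path: point it at a dataset on the pool you want to test.
    lat = sync_write_latencies("sync_latency_test.bin", count=200)
    print(f"avg {sum(lat) / len(lat):.2f} ms, max {max(lat):.2f} ms")
    os.remove("sync_latency_test.bin")
```

Running the same script against a dataset on each server would show whether the gap between the 845DC EVO and the 850 Pro pools already appears at the disk level, before ESXi and iSCSI are involved.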

This is the server with 2x Samsung 845DC EVO.

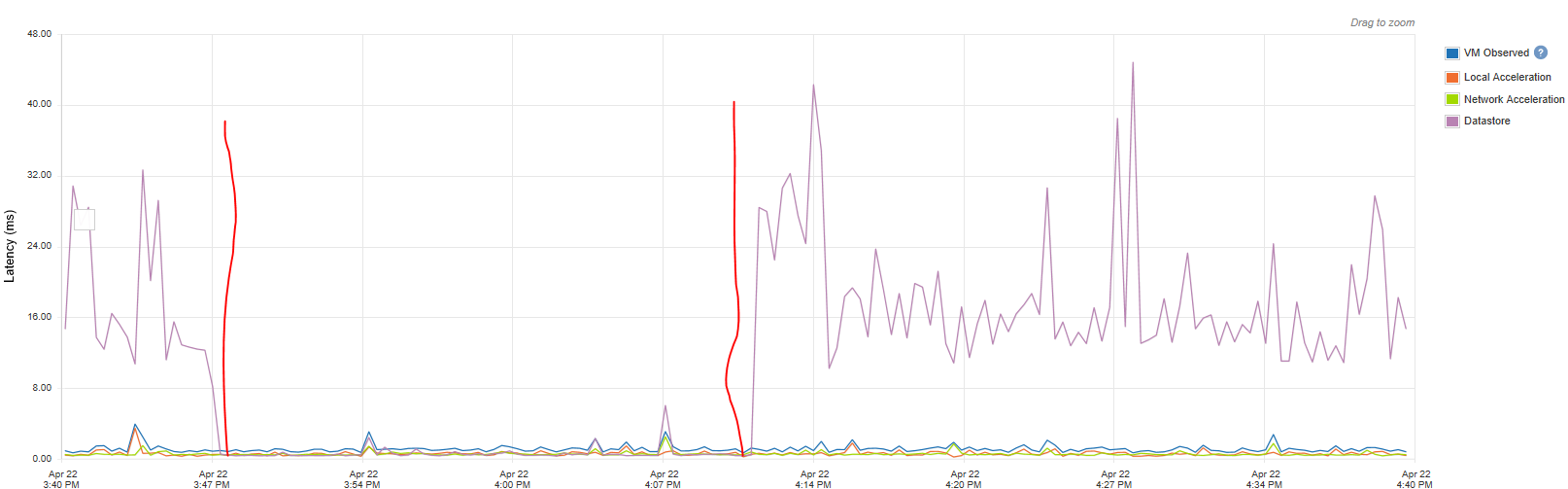

The next image is from Freenas 1.

2x Samsung 850 Pro

Between the bars: sync=standard, so of course practically no latency.

After setting sync=always I expected more or less the same latencies as with Freenas 2.

But as you can see, the latencies are much higher, between 10 and 30 ms. Of course this is still far better than anything I ever had with the ReadyNAS (which doesn't support SSDs, so I couldn't test them there).

But for an SSD server, this latency is quite poor.

The rest of the hardware is almost the same, so why these big differences?

Thanks to everyone who made it this far, and thank you for any answers.

A word about the 850 Pro.

I know this is a consumer drive and shouldn't be used in a NAS, although its TBW rating is quite high. I know it has no power-loss protection. I have connected an Eaton UPS to both FreeNAS servers and configured them to shut down in time in case of a power failure.

Furthermore, as explained above, I accept a small risk of data loss since I "just" run the terminal servers there.

Right now I'm asking myself how to proceed.

Of course this setup is probably not the final one. The servers draw ~130 W each, and although latency matters much more to me than raw throughput, I'd like to end up with a 10 Gbit/s solution.
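Since latency is the priority, a quick back-of-the-envelope calculation can show how much of it the link speed itself accounts for. The sketch below computes only the serialization time of one block on the wire, assuming no protocol overhead, no queueing and no switch latency; the function name and the block sizes are illustrative assumptions, not measurements from these servers.

```python
def wire_time_us(block_bytes, link_gbit_s):
    """Time in microseconds to serialize one block onto a link of the given speed.
    Ignores TCP/iSCSI overhead, queueing and switch latency."""
    bits = block_bytes * 8
    return bits / (link_gbit_s * 1e9) * 1e6


for size in (4096, 65536):
    print(f"{size} B: 1 Gbit/s {wire_time_us(size, 1):.1f} us, "
          f"10 Gbit/s {wire_time_us(size, 10):.1f} us")
```

Under these assumptions a 4 KiB block takes about 33 µs at 1 Gbit/s and about 3.3 µs at 10 Gbit/s, and a 64 KiB block about 524 µs versus 52 µs. Both are far below the 10-30 ms seen on Freenas 1, and both servers use the same 1 Gbit/s links, so 10 Gbit/s would mostly buy throughput headroom rather than fix that latency gap.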

I am considering buying 1 or even 2 (for redundancy) FreeNAS Mini XL units, or a FreeNAS certified system from iXsystems.

My IT company objects that FreeNAS is not certified as "VMware Ready". However, if this crappy Netgear ReadyNAS is certified but has unusable latencies, the "VMware Ready" label doesn't say much about real-life performance.

Of course I could also buy a TrueNAS from iXsystems, but that costs a lot of money.

Or I build my own FreeNAS server(s).

Or I listen to my IT company and buy a newer model of something like ReadyNAS, QNAP, etc.?

What do you think? I'm happy to hear any opinion on this.

Greetings