Hello Dear Forum,

I'm expanding my homelab.

I'm looking to increase the capacity of my current VM storage pool, a 3x500GB SSD RaidZ1.

I've gathered (well, scavenged from other systems...) two additional 500GB SSDs, and I'm willing to pay for up to 2 more units. Total: 7 units to play with.

During this revamp, I will also refit this pool with a PLP SSD as a LOG device.

Options I'm considering:

- Add another vdev: 2 vdevs (3xSSD RaidZ1 each) + SLOG

- Remake the pool as 1 vdev (7xSSD RaidZ2) + SLOG

- 3x mirrors + SLOG
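For a rough capacity comparison of the three options, here is a minimal sketch. It assumes every SSD is 500GB, that "3x mirrors" means three 2-way mirrors, and it ignores ZFS metadata, padding and free-space headroom, so the real numbers will be somewhat lower. The function names are only illustrative.

```python
DRIVE_GB = 500  # size of each SSD, as stated in the post


def raidz_usable_gb(drives_per_vdev: int, parity: int, vdevs: int = 1) -> int:
    """Data capacity of RaidZ vdevs: (drives - parity) per vdev."""
    return vdevs * (drives_per_vdev - parity) * DRIVE_GB


def mirror_usable_gb(vdevs: int) -> int:
    """Each mirror vdev contributes one drive's worth of capacity."""
    return vdevs * DRIVE_GB


# Assumption: the mirrors are 2-way, so this option uses 6 of the 7 SSDs.
layouts = {
    "2 x RaidZ1 (3 SSDs each)": raidz_usable_gb(3, parity=1, vdevs=2),
    "1 x RaidZ2 (7 SSDs)": raidz_usable_gb(7, parity=2),
    "3 x 2-way mirror": mirror_usable_gb(3),
}

for name, gb in layouts.items():
    print(f"{name}: about {gb} GB before ZFS overhead")
```

The SLOG device is left out because it does not add capacity to the pool.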

Questions:

1) Is there a significant difference in CPU load between the above setups?

2) Granted that the SSDs provide enough IOPS, does choosing mirrors over RaidZ(x) for VM storage matter as much as the traditional HDD advice suggests?

Currently I see no negative performance impact from a storage point of view, with the small exception of very high CPU use during installation of certain VMs from a CentOS Stream DVD image located on the TrueNAS box. The VM is 'living' on another physical box with a separate CPU. It is the storage part that taxes the TrueNAS CPU. I suspect this happens during the 'read all info from the DVD and check data' part. I might dig into that further somehow.

This got me thinking that it may become a problem if my 'swarm' of VMs doubles or triples in number "soon" and I have chosen a pool design that is known to be very poor.

Hardware list: i3-6100, X11SSL, LSI 9201-16i, 16GB RAM, TrueNAS 12.0-U1.

Cheers,

Edit:

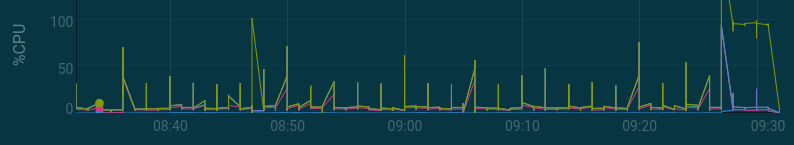

That 65% load is basically full load. I give TN access to 3 vCPUs (3 threads on 2 cores) and also set the CPU type to host, which potentially causes TN to show 65% in top but 100% in the GUI.

I made an effort at benchmarking to show the current load of the system:

Writing:

Code:

                  capacity     operations     bandwidth
    pool        alloc   free   read  write   read  write
    ----------  -----  -----  -----  -----  -----  -----
    tank         517G   867G      0  1.49K      0  21.0M
    tank         517G   867G      0  1.37K      0  18.0M
    tank         517G   867G      0  1.38K      0  18.5M
    tank         517G   867G      0  1.57K      0  20.5M
    tank         517G   867G      0  1.33K      0  18.6M
    tank         517G   867G      0  1.48K      0  21.0M

Code:

last pid: 14678; load averages: 3.54, 2.87, 1.68 up 5+12:40:29 08:30:22

88 processes: 2 running, 86 sleeping

CPU: 0.3% user, 0.0% nice, 67.3% system, 0.3% interrupt, 32.2% idle

Mem: 324M Active, 1911M Inact, 12G Wired, 1001M Free

ARC: 9904M Total, 8601M MFU, 425M MRU, 588M Anon, 65M Header, 222M Other

8665M Compressed, 11G Uncompressed, 1.35:1 Ratio

Code:

dd if=/dev/zero of=file bs=1048576

1376574767104 bytes transferred in 450.190785 secs (3 057 758 650 bytes/sec)

Reading:

Code:

last pid: 14946; load averages: 0.32, 0.89, 1.15 up 5+12:51:05 08:40:58

87 processes: 1 running, 86 sleeping

CPU: 0.0% user, 0.0% nice, 1.6% system, 0.0% interrupt, 98.4% idle

Mem: 327M Active, 1909M Inact, 12G Wired, 978M Free

ARC: 9331M Total, 8620M MFU, 420M MRU, 2856K Anon, 65M Header, 221M Other

8373M Compressed, 11G Uncompressed, 1.36:1 Ratio

Swap: 2048M Total, 2048M Free

Code:

157.496712 secs (8 740 346 395 bytes/sec)
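As a sanity check on the numbers above, here is a small sketch that recomputes the dd write rate and puts it next to the pool write bandwidth from the zpool iostat samples. It assumes the iostat samples were taken during the same dd write run, and it treats the `M` suffix as roughly MB/s for a ballpark comparison. The variable names are only illustrative.

```python
# Figures copied from the dd output in this post.
WRITE_BYTES = 1376574767104
WRITE_SECS = 450.190785
READ_RATE_REPORTED = 8740346395  # bytes/sec, as printed by dd

# Pool write bandwidth column from the zpool iostat samples (in "M").
IOSTAT_WRITE_M = [21.0, 18.0, 18.5, 20.5, 18.6, 21.0]

write_rate = WRITE_BYTES / WRITE_SECS          # bytes/sec seen by dd
iostat_avg_m = sum(IOSTAT_WRITE_M) / len(IOSTAT_WRITE_M)

print(f"dd write rate:     {write_rate / 1e9:.2f} GB/s")
print(f"dd read rate:      {READ_RATE_REPORTED / 1e9:.2f} GB/s")
print(f"pool write (avg):  {iostat_avg_m:.1f} M/s")
# Rough ratio only: assumes the iostat "M" is close enough to MB for this.
print(f"dd vs pool writes: about {write_rate / (iostat_avg_m * 1e6):.0f}x")
```

The large gap between what dd reports and what reaches the pool is probably because `/dev/zero` data compresses away almost entirely when compression is enabled on the dataset, so this test likely measures CPU and ARC behaviour more than the SSDs themselves. A test file with incompressible data would probably give a more representative picture.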
