electricbug (Cadet)

- Joined: Jul 20, 2018
- Messages: 2

Dear all,

I have just joined the trend of building a home lab on the VMware ESXi hypervisor, but I am very new to this, so please bear with me if my questions seem silly.

My current setup is:

- CPU: Intel Xeon E3-1220 v3
- Motherboard: ASUS P9D-MH/10G-dual (with onboard BCM57840 10GbE and Intel I210 GbE controllers)
- RAM: 32GB ECC UDIMM
- NIC: a separate ASUS BCM57840 10GbE PCIe card
- HBA: LSI 9207-8i
- Graphics: onboard 32MB VGA adapter, plus a PCIe AMD RX 550 2GB
- Storage:
  - 16GB USB key for the ESXi installation
  - 256GB 840 Pro SSD for the VMFS datastore
  - more HDDs to be attached to the HBA

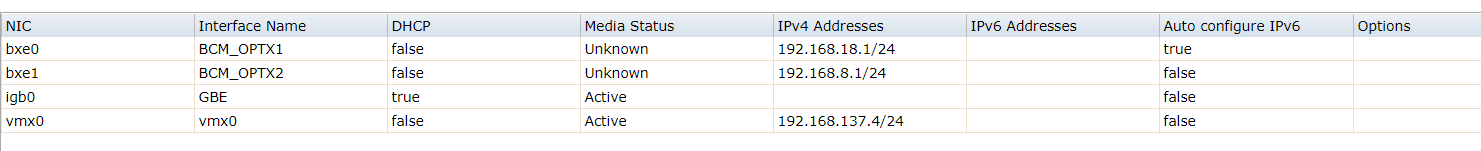

So I managed to install ESXi on the USB key, create a datastore on the SSD, and create two VMs on that datastore: one running Windows 10 64-bit and the other running FreeNAS. Both installed flawlessly. I tested PCIe passthrough by passing the RX 550 to the Windows VM and running some rendering tests. I also tested 1GbE network passthrough by passing the Intel GbE NIC to FreeNAS, and I was able to ping through it. So far so good.

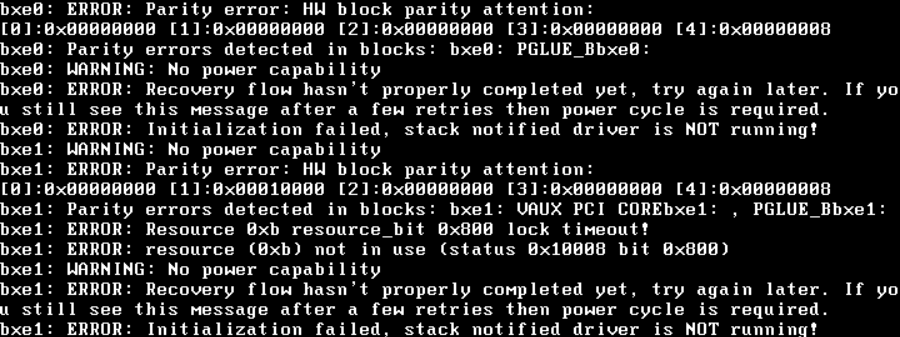

However, when I tried to repeat these tests with the onboard Broadcom 10GbE NICs, they refused to cooperate. On every boot, the FreeNAS console pauses on the NIC errors and then, after a few dozen seconds, carries on by itself. The same error appears when I try to configure the network interfaces.

Then I decided to try my luck: I passed the separate ASUS PCIe NIC card to the Windows VM, and the driver was installed automatically. I then connected the PCIe card to the onboard NIC on the motherboard with SFP cables. On the Windows VM I can see that the cable is plugged in, but there is no traffic. On the FreeNAS VM side, nothing changed.
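Whether any frames actually cross that cable can be checked independently of the "cable plugged in" indicator with a tiny UDP echo test. The following is a minimal Python sketch, not a known fix: `udp_echo` and `udp_probe` are hypothetical helper names, and it assumes each end of the cable has a static IPv4 address on the same isolated subnet and that Python 3 is available in both VMs.

```python
import socket


def udp_echo(bind_ip: str, port: int = 50000, count: int = 1) -> None:
    """Hypothetical helper: echo back `count` datagrams received on bind_ip:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:
        s.bind((bind_ip, port))
        for _ in range(count):
            data, addr = s.recvfrom(2048)
            s.sendto(data, addr)  # send the same bytes straight back


def udp_probe(bind_ip: str, peer_ip: str, port: int = 50000,
              payload: bytes = b"ping", timeout: float = 2.0) -> bool:
    """Hypothetical helper: send one datagram from bind_ip to peer_ip:port
    and return True only if the identical payload comes back in time."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:
        s.bind((bind_ip, 0))  # source address of the NIC under test, ephemeral port
        s.settimeout(timeout)
        try:
            s.sendto(payload, (peer_ip, port))
            data, _ = s.recvfrom(2048)
        except OSError:
            # A timeout (or an ICMP-driven socket error on some platforms)
            # is treated as "no traffic made the round trip".
            return False
        return data == payload
```

For example (addresses hypothetical), run `udp_echo("10.10.10.2")` on the Windows VM, then `udp_probe("10.10.10.1", "10.10.10.2")` on FreeNAS; the Windows firewall may need to allow inbound UDP on the port. `True` means datagrams make the round trip, while `False` with the link reported up points at the driver or passthrough rather than the cable. Binding a source address does not strictly pin the outgoing interface, which is why the test link should sit on its own subnet.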

The BCM NICs don't seem to be driven correctly.

At this point I have no idea how to debug this problem. Can anybody share some wisdom?

Thanks in advance!